To use Zero Downtime Migration (ZDM) I must install a Zero Downtime Migration service host. It is the piece of software that will control the entire process of migrating my database into Oracle Cloud Infrastructure (OCI). This post describes the process for ZDM version 21. The requirements are:

- Must be running Oracle Linux 7 or newer.

- 100 GB disk space according to the documentation. I could do with way less – basically there should be a few GBs for the binaries and then space for configuration and log files.

- SSH access (port 22) to each of the database hosts.

- Recommended to install it on a separate server (although technically possible to use one of the database hosts).
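The disk space and SSH requirements above can be checked before installing anything. This is a minimal sketch; the function names `has_free_space` and `can_reach_port` are made up for illustration and are not part of ZDM. It assumes `df`, `awk`, `timeout` and `bash` are available on the host.

```shell
# Preflight sketch for a prospective ZDM service host.
# Function names and thresholds are illustrative, not part of ZDM.

# Succeeds when the filesystem holding DIR has at least MIN_GB gigabytes free.
has_free_space() {
    local dir="$1" min_gb="$2" avail_kb
    # df -Pk prints POSIX-format output in 1 KiB blocks; column 4 is "available".
    avail_kb=$(df -Pk "$dir" | awk 'NR==2 {print $4}')
    [ "$avail_kb" -ge $((min_gb * 1024 * 1024)) ]
}

# Succeeds when a TCP connection to HOST:PORT can be opened within 5 seconds.
can_reach_port() {
    local host="$1" port="${2:-22}"
    # /dev/tcp is a bash feature, so bash is invoked explicitly.
    timeout 5 bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null
}

# Example usage (srchost is the source host name used later in this post):
#   has_free_space /u01 100 || echo "less than 100 GB free"
#   can_reach_port srchost 22 || echo "cannot reach srchost on port 22"
```

The checks only report; they do not change anything on the host.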

Create and configure server

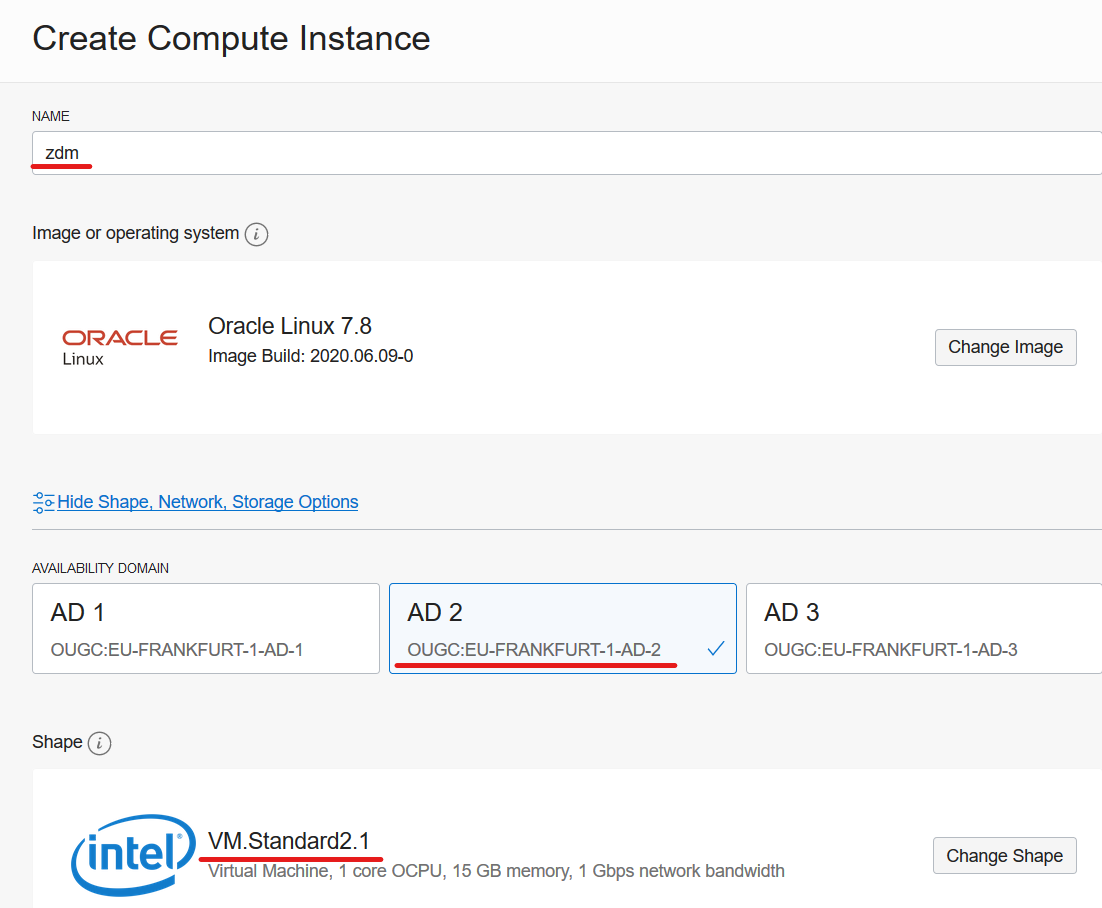

In my example, I will install the ZDM service host on a compute instance in OCI. There are no specific CPU or memory requirements, and ZDM only acts as a coordinator (all the work is done by the database hosts), so I can use the smallest compute shape available. I was inspired to use OCI CLI after reading a blog post by Michał, and I will use that approach to create the compute instance. I could just as well use the web interface or the REST APIs.

First, I will define a few variables that you have to change to your needs. DISPLAYNAME is the hostname of my compute instance – and also the name I see in the OCI webpage. AVAILDOM is the availability domain into which the compute instance is created. SHAPE is the compute shape:

DISPLAYNAME=zdm

AVAILDOM=OUGC:EU-FRANKFURT-1-AD-1

SHAPE=VM.Standard2.1

These are the same values I would enter when creating a compute instance in the OCI web console.

In addition, I will define the OCID of my compartment, and also the OCID of the subnet that I will use. I am making sure to select a subnet that I can reach via SSH from my own computer. Last, I have the public key file:

COMPARTMENTID="..."

SUBNETID="..."

PUBKEYFILE="/path/to/key-file.pub"

Because I want to use the latest Oracle Linux 7 image I will query for the OCID of that and store it in a variable:

IMAGEID=`oci compute image list \
    --compartment-id $COMPARTMENTID \
    --operating-system "Oracle Linux" \
    --sort-by TIMECREATED \
    --query "data[?contains(\"display-name\", 'GPU')==\\\`false\\\` && contains(\"display-name\", 'Oracle-Linux-7')==\\\`true\\\`].{DisplayName:\"display-name\", OCID:\"id\"} | [0]" \
    | grep OCID \
    | awk -F'[\"|\"]' '{print $4}'`
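The `grep` and `awk` steps at the end of that pipeline pull the OCID out of the JSON returned by OCI CLI. The sketch below shows the same extraction on a single sample line. The OCID value is made up, and a plain double quote is used as the field separator, which is enough for this input.

```shell
# A sample line of the kind the grep step passes on; the OCID value is made up.
sample='  "OCID": "ocid1.image.oc1..exampleuniqueid"'

# Splitting on double quotes yields:
#   $1 = leading spaces, $2 = OCID, $3 = ": ", $4 = the value.
printf '%s\n' "$sample" | awk -F'"' '{print $4}'
```

This prints the bare OCID without quotes, which is what ends up in IMAGEID.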

And now I can create the compute instance:

oci compute instance launch \
    --compartment-id $COMPARTMENTID \
    --display-name $DISPLAYNAME \
    --availability-domain $AVAILDOM \
    --subnet-id $SUBNETID \
    --image-id $IMAGEID \
    --shape $SHAPE \
    --ssh-authorized-keys-file $PUBKEYFILE \
    --wait-for-state RUNNING

The command waits until the compute instance is up and running because I used the --wait-for-state RUNNING option. Now, I can get the public IP address so I can connect to the instance:

VMID=`oci compute instance list \
    --compartment-id $COMPARTMENTID \
    --display-name $DISPLAYNAME \
    --lifecycle-state RUNNING \
    | grep \"id\" \
    | awk -F'[\"|\"]' '{print $4}'`

oci compute instance list-vnics \
    --instance-id $VMID \
    | grep public-ip \
    | awk -F'[\"|\"]' '{print $4}'
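As an alternative to `grep` and `awk`, OCI CLI can filter its own output with the `--query` option (a JMESPath expression) combined with `--raw-output`. The following is a sketch of that approach; the function name `get_public_ip` is hypothetical, and it assumes the first VNIC of the instance is the one carrying the public IP.

```shell
# Print the public IP of the first VNIC of the instance with the given OCID.
# --query takes a JMESPath expression; --raw-output drops the JSON quotes.
get_public_ip() {
    local instance_id="$1"
    oci compute instance list-vnics \
        --instance-id "$instance_id" \
        --query 'data[0]."public-ip"' \
        --raw-output
}

# Example usage, with VMID set as above and /path/to/key-file being the
# private key that matches PUBKEYFILE:
#   PUBLICIP=$(get_public_ip "$VMID")
#   ssh -i /path/to/key-file opc@"$PUBLICIP"
```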

Prepare Host

The installation process is described in the documentation which you should visit to get the latest changes. Log on to the ZDM service host and install required packages (python36 is needed for OCI CLI):

[root@zdm]$ yum -y install \
    glibc-devel \
    expect \
    unzip \
    libaio \
    kernel-uek-devel-$(uname -r) \
    python36

Create a group (zdm) and a user (zdmuser). This is only needed on the ZDM service host. You don’t need this user on the database hosts:

[root@zdm]$ groupadd zdm ; useradd -g zdm zdmuser

Make it possible to SSH to the box as zdmuser. I will just reuse the SSH keys from opc:

[root@zdm]$ cp -r /home/opc/.ssh /home/zdmuser/.ssh ; chown -R zdmuser:zdm /home/zdmuser/.ssh

Create directory for Oracle software and change permissions:

[root@zdm]$ mkdir /u01 ; chown zdmuser:zdm /u01

Edit the hosts file, and ensure that name resolution works for the source host (srchost) and the target host (tgthost):

[root@zdm]$ echo -e "[ip address] srchost" >> /etc/hosts

[root@zdm]$ echo -e "[ip address] tgthost" >> /etc/hosts
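Running the `echo` commands twice adds duplicate lines to the hosts file. A small helper can make the step repeatable. This is a sketch only; `add_host_entry` is a name invented for this example, and the optional third argument exists so the function can be tried on a scratch file before touching /etc/hosts.

```shell
# Append "IP NAME" to a hosts file unless NAME already has an entry.
# Usage: add_host_entry IP NAME [FILE]   (FILE defaults to /etc/hosts)
add_host_entry() {
    local ip="$1" name="$2" file="${3:-/etc/hosts}"
    # Look for NAME as a whole field (column 2 onwards) on a non-comment line.
    if awk -v n="$name" '
            !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$file" 2>/dev/null; then
        echo "$name already present in $file"
    else
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Example usage (as root; replace the addresses with the real ones):
#   add_host_entry 10.0.0.5 srchost
#   add_host_entry 10.0.0.6 tgthost
```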

Install And Configure ZDM

Now, to install ZDM I will log on as zdmuser and set the environment in my .bashrc file:

[zdmuser@zdm]$ echo "INVENTORY_LOCATION=/u01/app/oraInventory; export INVENTORY_LOCATION" >> ~/.bashrc

[zdmuser@zdm]$ echo "ORACLE_BASE=/u01/app/oracle; export ORACLE_BASE" >> ~/.bashrc

[zdmuser@zdm]$ echo "ZDM_BASE=\$ORACLE_BASE; export ZDM_BASE" >> ~/.bashrc

[zdmuser@zdm]$ echo "ZDM_HOME=\$ZDM_BASE/zdm21; export ZDM_HOME" >> ~/.bashrc

[zdmuser@zdm]$ echo "ZDM_INSTALL_LOC=/u01/zdm21-inst; export ZDM_INSTALL_LOC" >> ~/.bashrc

[zdmuser@zdm]$ source ~/.bashrc

Create the directories:

[zdmuser@zdm]$ mkdir -p $ORACLE_BASE $ZDM_BASE $ZDM_HOME $ZDM_INSTALL_LOC
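Before continuing, it can be worth confirming that the variables from .bashrc are actually exported in the current session. The sketch below does that; `check_zdm_env` is a hypothetical helper, and it relies on `printenv`, which only sees exported variables.

```shell
# Report any of the expected variables that are unset, empty or not exported.
# Returns non-zero if at least one is missing.
check_zdm_env() {
    local var missing=0
    for var in INVENTORY_LOCATION ORACLE_BASE ZDM_BASE ZDM_HOME ZDM_INSTALL_LOC; do
        # printenv prints nothing when the variable is not in the environment.
        if [ -z "$(printenv "$var")" ]; then
            echo "$var is not set" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Example usage:
#   check_zdm_env && echo "environment looks complete"
```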

Next, download the ZDM software into $ZDM_INSTALL_LOC.

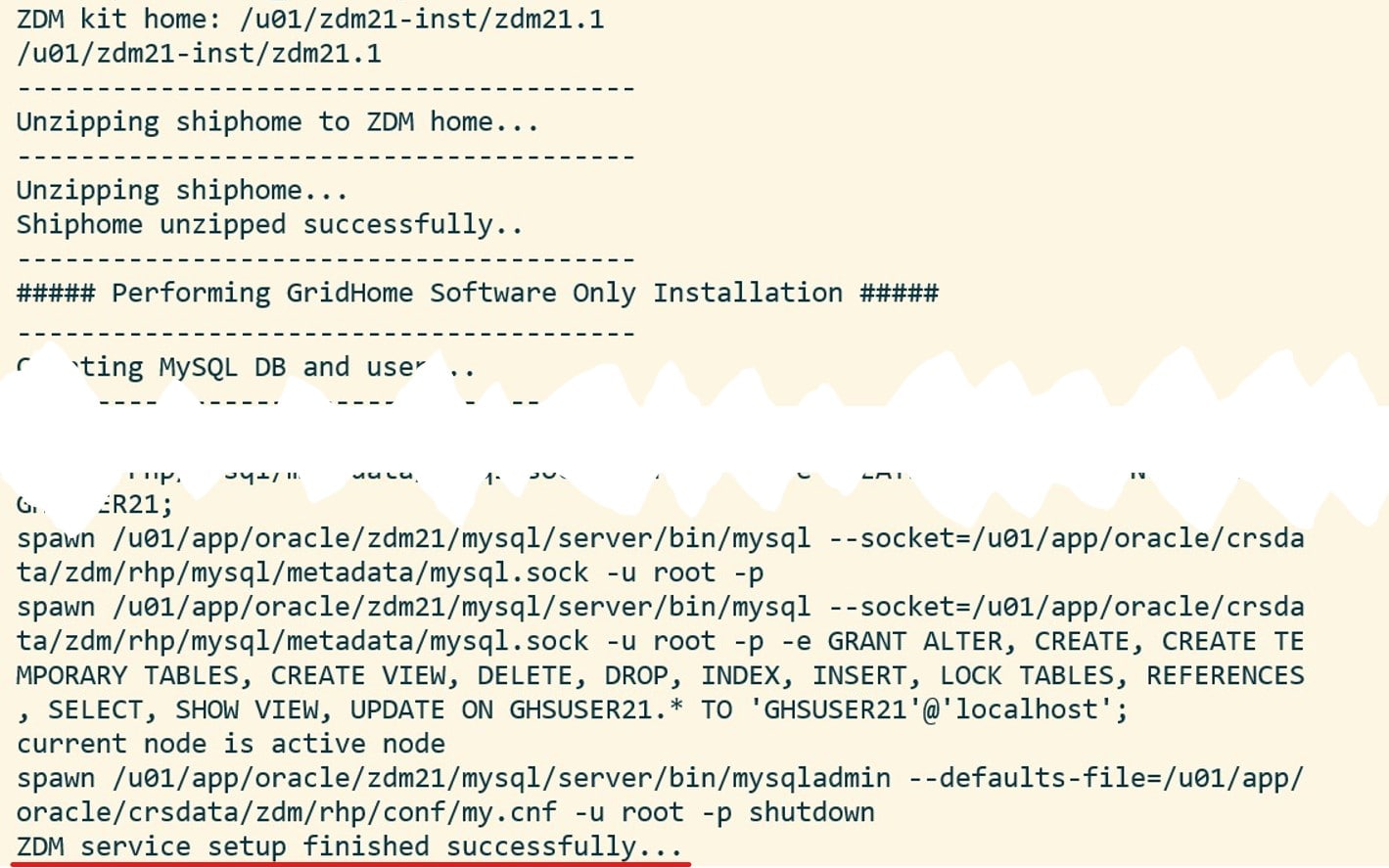

Once downloaded, start the installation:

[zdmuser@zdm]$ ./zdminstall.sh setup \
    oraclehome=$ZDM_HOME \
    oraclebase=$ZDM_BASE \
    ziploc=./zdm_home.zip -zdm

The output should look similar to this:

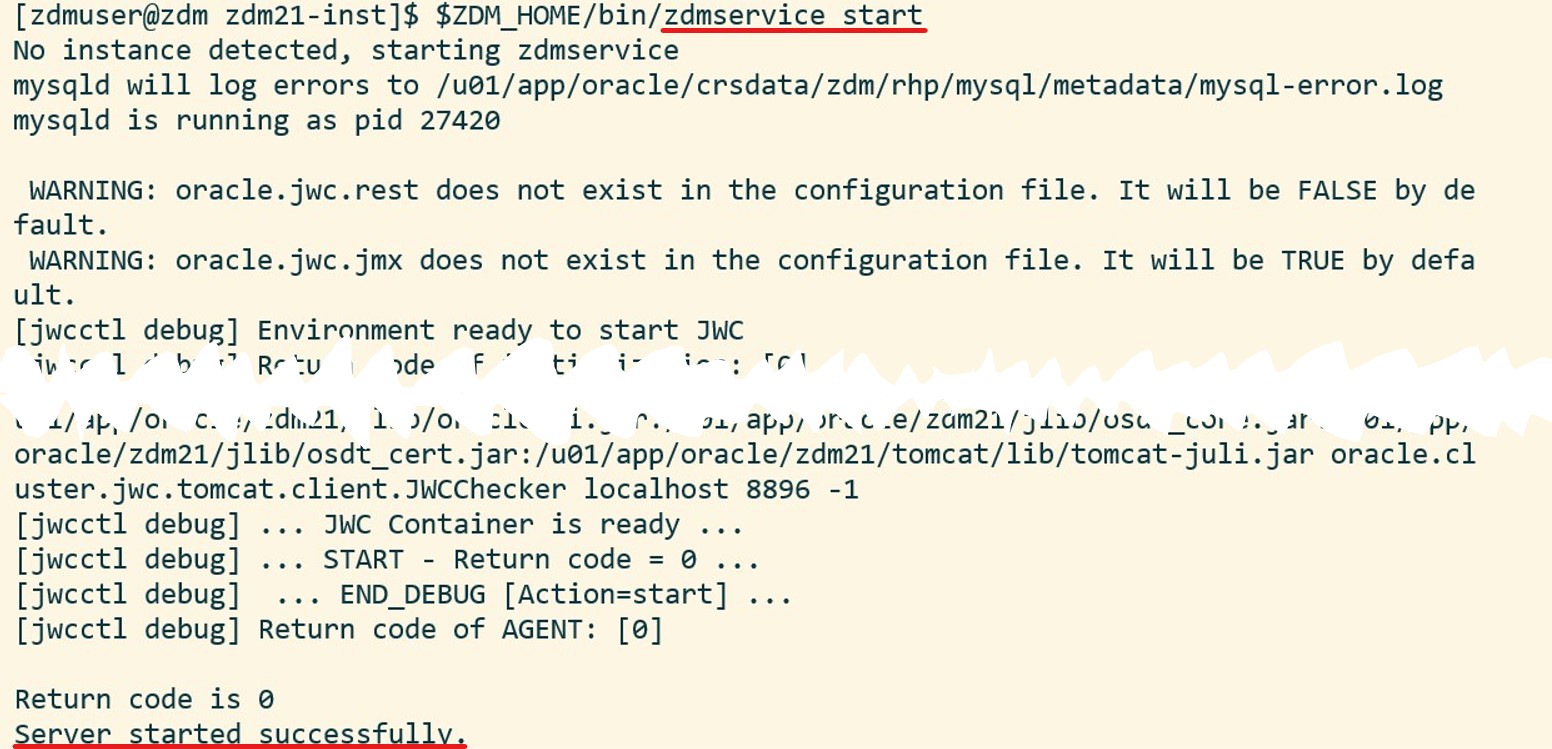

Start the ZDM service:

[zdmuser@zdm]$ $ZDM_HOME/bin/zdmservice start

Which should produce something like this:

And, optionally, I can verify the status of the ZDM service:

[zdmuser@zdm]$ $ZDM_HOME/bin/zdmservice status

Install OCI CLI

You might need OCI CLI as part of the migration. It is simple to install, so I always do it:

bash -c "$(curl -L https://raw.githubusercontent.com/oracle/oci-cli/master/scripts/install/install.sh)"

oci setup config

You can find further instructions in the OCI CLI documentation.

Configure Network Connectivity

The ZDM service host must communicate with the source and target hosts via SSH. For that purpose, I need a private key file for each of the hosts. The private key files must be without a passphrase and in RSA/PEM format, and I have to put them at /home/zdmuser/.ssh/[host name]. In my demo, the files are to be named:

- /home/zdmuser/.ssh/srchost

- /home/zdmuser/.ssh/tgthost

Ensure that only zdmuser can read them:

[zdmuser@zdm]$ chmod 400 /home/zdmuser/.ssh/srchost

[zdmuser@zdm]$ chmod 400 /home/zdmuser/.ssh/tgthost

Now, I will verify the connection. In my example I will connect to opc on both database hosts, but you can change it if you like:

[zdmuser@zdm]$ ssh -i /home/zdmuser/.ssh/srchost opc@srchost

[zdmuser@zdm]$ ssh -i /home/zdmuser/.ssh/tgthost opc@tgthost
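The same check can be run without an interactive login, which is closer to how a tool connects. This is a minimal sketch: `check_ssh` is a name made up for this example, and it assumes the key for each host is stored at ~/.ssh/[host name] as described above and that the remote user is opc unless stated otherwise.

```shell
# Succeeds if USER@HOST accepts the key at ~/.ssh/HOST without prompting.
check_ssh() {
    local host="$1" user="${2:-opc}"
    # BatchMode=yes makes ssh fail instead of asking for a password or passphrase.
    ssh -i "$HOME/.ssh/$host" \
        -o BatchMode=yes \
        -o ConnectTimeout=10 \
        "$user@$host" true
}

# Example usage:
#   for h in srchost tgthost; do
#       check_ssh "$h" && echo "$h: ok" || echo "$h: failed"
#   done
```

A failure here with a key that works interactively usually means ssh wanted to prompt for something, such as a passphrase or an unknown host key.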

If you get an error when connecting ensure the following:

- The public key is added to /home/opc/.ssh/authorized_keys on both database hosts (change opc if you are connecting as another user)

- The key files are in RSA/PEM format (the private key file should start with -----BEGIN RSA PRIVATE KEY-----)

- The key files are without a passphrase
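The local parts of that checklist (key format, passphrase, file permissions) can be tested on the ZDM service host without connecting anywhere. The sketch below uses a hypothetical helper, `check_key_file`, and assumes GNU `stat` as found on Oracle Linux. It relies on the fact that passphrase-protected PEM keys carry a `Proc-Type: 4,ENCRYPTED` header line.

```shell
# Check a private key file for format, passphrase protection and permissions.
# Usage: check_key_file /home/zdmuser/.ssh/srchost
check_key_file() {
    local key="$1" failed=0
    # RSA keys in PEM format start with this exact header line.
    if [ "$(head -n 1 "$key")" != "-----BEGIN RSA PRIVATE KEY-----" ]; then
        echo "$key: not in RSA/PEM format" >&2
        failed=1
    fi
    # Passphrase-protected PEM keys carry a "Proc-Type: 4,ENCRYPTED" header.
    if grep -q '^Proc-Type:.*ENCRYPTED' "$key"; then
        echo "$key: protected by a passphrase" >&2
        failed=1
    fi
    # Mode 400 or 600 means no access for group or others.
    case "$(stat -c '%a' "$key")" in
        400|600) ;;
        *) echo "$key: permissions too open" >&2; failed=1 ;;
    esac
    return "$failed"
}
```

The function does not verify that the matching public key is present in authorized_keys on the database host; only a real connection attempt can confirm that.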

That’s It

Now, I have a working ZDM service host. I am ready to start the migration process.

It is probably also a good idea to find a way to start the ZDM service automatically, if the server restarts.
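One possible way to do that is a systemd unit. The following is an untested sketch, not an Oracle-provided configuration: the unit name zdm.service, the helper `write_zdm_unit`, and the choice of `Type=oneshot` are all assumptions, and the default path matches the ZDM_HOME used in this post. It also assumes that `zdmservice start` does not depend on variables from .bashrc.

```shell
# Write a systemd unit for the ZDM service to the given path.
# Usage (as root): write_zdm_unit /etc/systemd/system/zdm.service
write_zdm_unit() {
    local unit_file="$1"
    local zdm_home="${2:-/u01/app/oracle/zdm21}"
    cat > "$unit_file" <<EOF
[Unit]
Description=Zero Downtime Migration service
After=network-online.target
Wants=network-online.target

[Service]
# Assumption: "zdmservice start" returns once the service is up,
# so the unit is modelled as a one-shot that stays active.
Type=oneshot
RemainAfterExit=yes
User=zdmuser
Group=zdm
ExecStart=$zdm_home/bin/zdmservice start
ExecStop=$zdm_home/bin/zdmservice stop

[Install]
WantedBy=multi-user.target
EOF
}

# Then, as root:
#   systemctl daemon-reload
#   systemctl enable --now zdm.service
```

Whatever mechanism is chosen, it is worth rebooting the server once and running zdmservice status to confirm that the service came back.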

There is also a community marketplace image that comes with ZDM already installed. You can read about it here; evaluate it and see if it is something for you.

Other Blog Posts in This Series

- Introduction

- Install And Configure ZDM

- Physical Online Migration

- Physical Online Migration to DBCS

- Physical Online Migration to ExaCS

- Physical Online Migration and Testing

- Physical Online Migration of Very Large Databases

- Logical Online Migration

- Logical Online Migration to DBCS

- Logical Offline Migration to Autonomous Database

- Logical Online Migration and Testing

- Logical Online Migration of Very Large Databases

- Logical Online and Sequences

- Logical Offline Migration How To Minimize Downtime

- Logical Migration and Statistics

- Logical Migration and the Final Touches

- Create GoldenGate Hub

- Monitor GoldenGate Replication

- The Pro Tips

I have the following error and failed to start server. Can anyone help me please?

[zdmuser@bastiondev-852606 zdm19-inst]$ $ZDM_HOME/bin/zdmservice start

No instance detected, starting zdmservice

PRCT-1011 : Failed to run “orabase”. Detailed error:

[jwcctl debug] Environment ready to start JWC

[jwcctl debug] Return code of initialization: [0]

[jwcctl debug] … BEGIN_DEBUG [Action= start] …

Start JWC

[jwcctl debug] Loading configuration file: /u01/app/oracle/crsdata/bastiondev-852606/rhp/conf/jwc.properties

[jwcctl debug] oracle.jmx.login.credstore = CRSCRED

[jwcctl debug] oracle.jmx.login.args = DOMAIN=rhp

[jwcctl debug] oracle.rmi.url = service:jmx:rmi://{0}:{1,number,#}/jndi/rmi://{0}:{1,number,#}/jmxrmi

[jwcctl debug] oracle.http.url = http://{0}:{1,number,#}/rhp/gridhome

[jwcctl debug] oracle.jwc.tls.clientauth = false

[jwcctl debug] oracle.jwc.tls.rmi.clientfactory = RELOADABLE

[jwcctl debug] oracle.jwc.lifecycle.start.log.fileName = JWCStartEvent.log

[jwcctl debug] Get JWC PIDs

[jwcctl debug] Done Getting JWC PIDs

[jwcctl debug] … JWC containers not found …

[jwcctl debug] Start command:-server -Xms128M -Xmx384M -Djava.awt.headless=true -Ddisable.checkForUpdate=true -Djava.util.logging.config.file=/u01/app/oracle/crsdata/bastiondev-852606/rhp/conf/logging.properties -Djava.util.logging.manager=org.apache.juli.ClassLoaderLogManager -DTRACING.ENABLED=true -DTRACING.LEVEL=2 -Doracle.wlm.dbwlmlogger.logging.level=FINEST -Duse_scan_IP=true -Djava.rmi.server.hostname=bastiondev-852606 -Doracle.http.port=8896 -Doracle.jmx.port=8895 -Doracle.tls.enabled=false -Doracle.jwc.tls.http.enabled=false -Doracle.rhp.storagebase=/u01/app/oracle -Djava.security.egd=file:/dev/urandom -Doracle.jwc.wallet.path=/u01/app/oracle/crsdata/bastiondev-852606/security -Doracle.jmx.login.credstore=WALLET -Dcatalina.home=/u01/app/oracle/zdm19/tomcat -Dcatalina.base=/u01/app/oracle/crsdata/bastiondev-852606/rhp -Djava.io.tmpdir=/u01/app/oracle/crsdata/bastiondev-852606/rhp/temp -Doracle.home=/u01/app/oracle/zdm19 -Doracle.jwc.mode=STANDALONE -classpath /u01/app/oracle/zdm19/jlib/cryptoj.jar:/u01/app/oracle/zdm19/jlib/oraclepki.jar:/u01/app/oracle/zdm19/jlib/osdt_core.jar:/u01/app/oracle/zdm19/jlib/osdt_cert.jar:/u01/app/oracle/zdm19/tomcat/lib/tomcat-juli.jar:/u01/app/oracle/zdm19/tomcat/lib/bootstrap.jar:/u01/app/oracle/zdm19/jlib/jwc-logging.jar org.apache.catalina.startup.Bootstrap start

[jwcctl debug] Get JWC PIDs

[jwcctl debug] Done Getting JWC PIDs

[jwcctl debug] … JWC Container (pid=21971) …

[jwcctl debug] … JWC Container running (pid=21971) …

[jwcctl debug] Check command:-Djava.net.preferIPv6Addresses=true -Dcatalina.base=/u01/app/oracle/crsdata/bastiondev-852606/rhp -Doracle.wlm.dbwlmlogger.logging.level=FINEST -Doracle.jwc.client.logger.file.name=/u01/app/oracle/crsdata/bastiondev-852606/rhp/logs/jwc_checker_stdout_err_%g.log -Doracle.jwc.client.logger.file.number=10 -Doracle.jwc.client.logger.file.size=1048576 -Doracle.jwc.wallet.path=/u01/app/oracle/crsdata/bastiondev-852606/security -Doracle.jmx.login.credstore=WALLET -Doracle.tls.enabled=false -Doracle.jwc.tls.http.enabled=false -classpath /u01/app/oracle/zdm19/jlib/jwc-logging.jar:/u01/app/oracle/zdm19/jlib/jwc-security.jar:/u01/app/oracle/zdm19/jlib/jwc-client.jar:/u01/app/oracle/zdm19/jlib/jwc-cred.jar:/u01/app/oracle/zdm19/jlib/srvm.jar:/u01/app/oracle/zdm19/jlib/srvmhas.jar:/u01/app/oracle/zdm19/jlib/cryptoj.jar:/u01/app/oracle/zdm19/jlib/oraclepki.jar:/u01/app/oracle/zdm19/jlib/osdt_core.jar:/u01/app/oracle/zdm19/jlib/osdt_cert.jar:/u01/app/oracle/zdm19/tomcat/lib/tomcat-juli.jar oracle.cluster.jwc.tomcat.client.JWCChecker localhost 8896 -1

CRS_ERROR:TCC-0004: The container was not able to start.

CRS_ERROR:One or more listeners failed to start. Full details will be found in the appropriate container log file

Context [/rhp] startup failed due to previous errors

sync_start failed with exit code 1.

[jwcctl debug] … JWC Container is not ready …

[jwcctl debug] … START – Return code = 1 …

[jwcctl debug] … END_DEBUG [Action=start] …

[jwcctl debug] Return code of AGENT: [1]

Return code is 1

Start operation could not start zdmservice.

Cleaning current attempt…

[jwcctl debug] Environment ready to start JWC

[jwcctl debug] Return code of initialization: [0]

[jwcctl debug] … BEGIN_DEBUG [Action= clean] …

Clean JWC

[jwcctl debug] Get JWC checker PIDs

[jwcctl debug] Done getting JWC checker PIDs

[jwcctl debug] Get JWC shutter PIDs

[jwcctl debug] Done getting JWC shutter PIDs

[jwcctl debug] Get JWC PIDs

[jwcctl debug] Done Getting JWC PIDs

[jwcctl debug] … Cleaned JWC Container (pid=21971) …

[jwcctl debug] Get JWC checker PIDs

[jwcctl debug] Done getting JWC checker PIDs

[jwcctl debug] Get JWC shutter PIDs

[jwcctl debug] Done getting JWC shutter PIDs

[jwcctl debug] Get JWC PIDs

[jwcctl debug] Done Getting JWC PIDs

[jwcctl debug] … CLEAN – Return code = 0 …

[jwcctl debug] … END_DEBUG [Action=clean] …

[jwcctl debug] Return code of AGENT: [0]

Return code is 0

zdmservice start failed…

Hi,

Please try to have a look in this log file:

$ORACLE_BASE/crsdata//rhpserver.log

It should contain more details. The only thing I could find on this error message is mentioned in the following MOS note:

RHP command fails with CRS-2674: Start of ‘ora.rhpclient’ on ‘hostname’ failed TCC-0004: The container was not able to start. (Doc ID 2582512.1)

Regards,

Daniel

Thank you for the reply. I got the following error when I install the zdm_home.zip.

—————————————

##### Starting GridHome Software Only Installation #####

—————————————

Launching Oracle Grid Infrastructure Setup Wizard…

[WARNING] [INS-42505] The installer has detected that the Oracle Grid Infrastructure home software at (/u01/app/oracle/zdm19) is not complete.

CAUSE: Following files are missing:

[/u01/app/oracle/zdm19/jlib/jackson-annotations-2.9.5.jar, /u01/app/oracle/zdm19/jlib/jackson-core-2.9.5.jar, /u01/app/oracle/zdm19/jlib/jackson-databind-2.9.5.jar, /u01/app/oracle/zdm19/jlib/jackson-jaxrs-base-2.9.5.jar, /u01/app/oracle/zdm19/jlib/jackson-jaxrs-json-provider-2.9.5.jar, /u01/app/oracle/zdm19/jlib/jackson-module-jaxb-annotations-2.9.5.jar, /u01/app/oracle/zdm19/jlib/jersey-client-2.24.jar, /u01/app/oracle/zdm19/jlib/jersey-common-2.24.jar, /u01/app/oracle/zdm19/jlib/jersey-container-servlet-2.24.jar, /u01/app/oracle/zdm19/jlib/jersey-container-servlet-core-2.24.jar, /u01/app/oracle/zdm19/jlib/jersey-entity-filtering-2.24.jar, /u01/app/oracle/zdm19/jlib/jersey-guava-2.24.jar, /u01/app/oracle/zdm19/jlib/jersey-media-jaxb-2.24.jar, /u01/app/oracle/zdm19/jlib/jersey-media-json-jackson-2.24.jar, /u01/app/oracle/zdm19/jlib/jersey-server-2.24.jar]

ACTION: Ensure that the Oracle Grid Infrastructure home at (/u01/app/oracle/zdm19) includes the files listed above.

[WARNING] [INS-41813] OSDBA for ASM, OSOPER for ASM, and OSASM are the same OS group.

CAUSE: The group you selected for granting the OSDBA for ASM group for database access, and the OSOPER for ASM group for startup and shutdown of Oracle ASM, is the same group as the OSASM group, whose members have SYSASM privileges on Oracle ASM.

ACTION: Choose different groups as the OSASM, OSDBA for ASM, and OSOPER for ASM groups.

[WARNING] [INS-41875] Oracle ASM Administrator (OSASM) Group specified is same as the users primary group.

CAUSE: Operating system group zdm specified for OSASM Group is same as the users primary group.

ACTION: It is not recommended to have OSASM group same as primary group of user as it becomes the inventory group. Select any of the group other than the primary group to avoid misconfiguration.

[WARNING] [INS-32022] Grid infrastructure software for a cluster installation must not be under an Oracle base directory.

CAUSE: Grid infrastructure for a cluster installation assigns root ownership to all parent directories of the Grid home location. As a result, ownership of all named directories in the software location path is changed to root, creating permissions errors for all subsequent installations into the same Oracle base.

ACTION: Specify software location outside of an Oracle base directory for grid infrastructure for a cluster installation.

[WARNING] [INS-32055] The Central Inventory is located in the Oracle base.

ACTION: Oracle recommends placing this Central Inventory in a location outside the Oracle base directory.

[WARNING] [INS-13014] Target environment does not meet some optional requirements.

CAUSE: Some of the optional prerequisites are not met. See logs for details. gridSetupActions2020-08-11_03-21-28AM.log

ACTION: Identify the list of failed prerequisite checks from the log: gridSetupActions2020-08-11_03-21-28AM.log. Then either from the log file or from installation manual find the appropriate configuration to meet the prerequisites and fix it manually.

The response file for this session can be found at:

/u01/app/oracle/zdm19/install/response/grid_2020-08-11_03-21-28AM.rsp

Hi,

Those warnings are expected during the installation. They are not the cause of the problem.

Unless you can get any clues from the MOS note I mentioned earlier, you have to open a service request at support.

Regards,

Daniel

I find it helpful that this blog post breaks down each step of setting up a Zero Downtime Migration Service host.

Hi,

Thanks for the positive feedback. Much appreciated.

Regards,

Daniel
