This blog post is written based on ZDM version 21. The latest version of ZDM has significant changes. Please consult the documentation for updates.

Following the blog post on migrating Very Large Databases (VLDBs) using Logical Online method, let’s touch upon the Physical Online method as well.

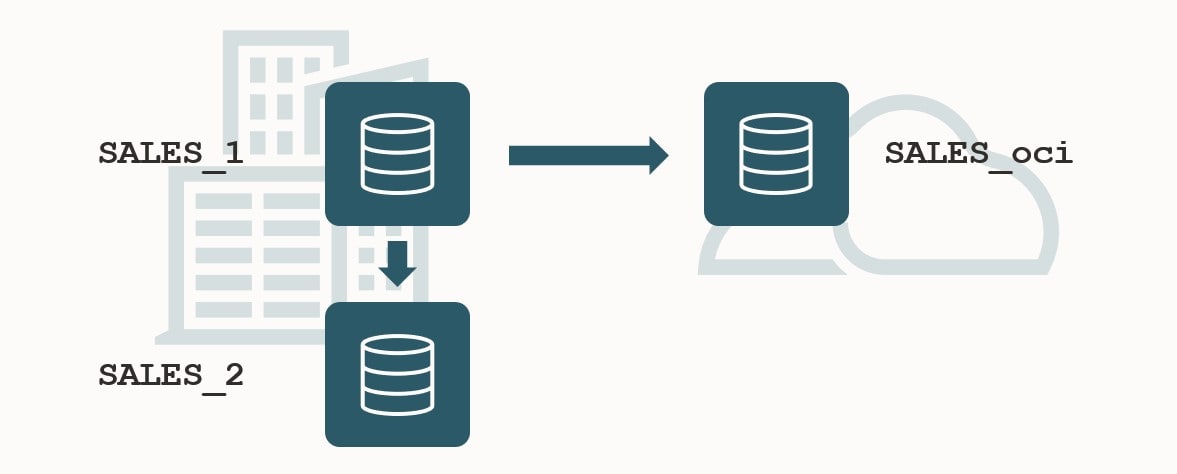

Existing Data Guard

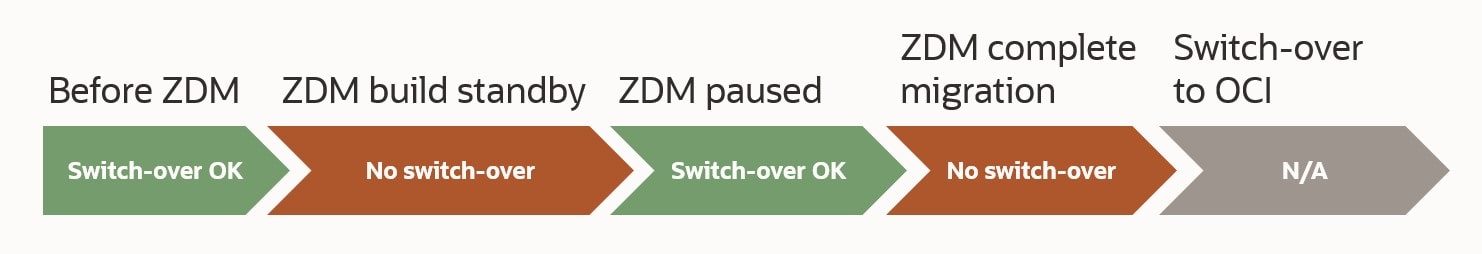

VLDBs are very often protected by Data Guard with one or more standby databases. When you start a migration with Zero Downtime Migration (ZDM) you don’t want to promise your existing Data Guard setup. If something happens during the preparation of the migration to OCI, you still want to be able to switch over to an on-prem standby database. Something like this:

I recommend that you always configure Data Guard using Data Guard broker. ZDM supports using the broker for the migration. If you don’t have Data Guard broker configured, ZDM can use a manual configuration as well.

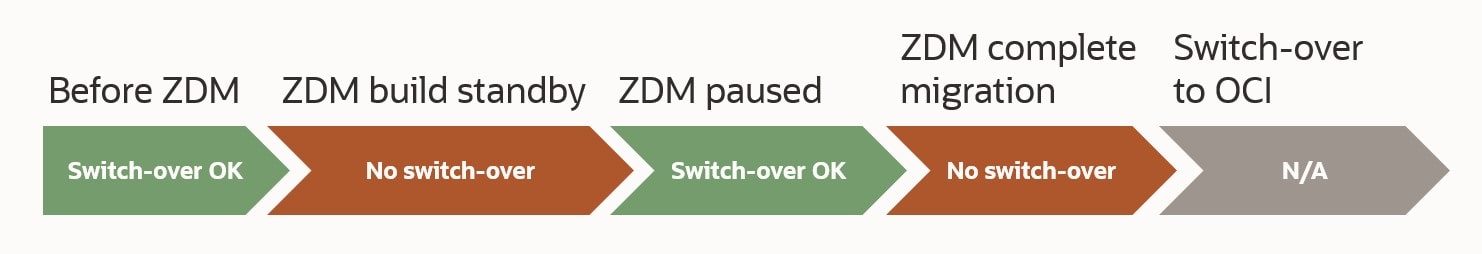

A few things to observe:

- When ZDM is working, no switch-overs are allowed. This will cause the process to error out.

- By working I mean – from the second you start the ZDM migration and until it is paused at ZDM_CONFIGURE_DG_SRC.

- When ZDM is paused, you can do as many switch-overs as you like. Just ensure that the original source database become the primary database again as soon as possible.

- When you need to complete the migration, the source database must be the primary database and no switch-overs are allowed. Which does make sense because in this last phase, ZDM is switching over to the OCI target database.

- What about fail-overs. Fail-overs means loss of data and to accept that you need to open the database with RESETLOGS. This causes all sort of havoc in your Data Guard setup. You are back to start.

The Backup

ZDM needs a full backup that can be restored on your target.

- DBCS: If your target database is one of the OCI Cloud Services (Virtual Machine, Bare Metal or Exadata DB System), ZDM will need to take a new full backup. Existing backups can’t be used.

- ExaCC or Exadata on-prem: You can either take a new full backup or use an existing backup that is made available on disk. In addition, if you are so fortunate to have a Zero Data Loss Recovery Appliance (ZDLRA), you don’t need to take a backup. ZDM can just restore directly from ZDLRA.

If you are targeting a DBCS your DATA_TRANSFER_MEDIUM is set to OSS (Object Storage Service). The backup is stored in Object Storage using Oracle Database Cloud Backup Module for OCI. The backup in the source database and the restore in the target database will happen via a special sbt channel which streams the backup directly to and from Object Storage. This means:

- The backup never hits the disk, so you don’t need additional disk space to hold the backup.

- The duration of the backup is depending on your network speed to OCI. Since the backup is streamed directly to OCI, the network can become a bottleneck. If you have a slow connection to OCI, the backup will run equally slow. The same applies about the restore, however, the target database is already in OCI and does have a good connection to Object Storage.

ZDM will by default use 10 RMAN channels for the restore and the backup. With your knowledge of the source database, you might know better. You can tweak the number of channels in the response file. Look for the parameters SRC_RMAN_CHANNELS and TGT_RMAN_CHANNELS.

Based on your knowledge or testing you can determine which RMAN Compression algorithm that gives the best benefit on your database. You can adjust the compression algorithm in the response file using the parameter ZDM_RMAN_COMPRESSION_ALGORITHM. The default is MEDIUM which is normally gives the best balance between compression ratio and CPU time. And remember, RMAN Compression normally require a license for Advanced Compression Option but when you migrate with ZDM, there is a restricted use license.

While ZDM is taking a backup of the source database, no other backups should be running. Be sure to put your regular backups, including archive backups, on hold.

Redo Apply

In the source database, you should keep archive logs on disk until the target database has been restored, Data Guard has been configured, and the target database has caught up with redo apply.

If you have a slow network connection and a huge database, it can take days until the backup has completed, restored has completed and redo apply has caught up.

- Imagine you start the backup at sequence 100.

- The restore of the target database finishes two days later. The source database is now at sequence 200.

- ZDM configure Data Guard and starts redo transfer and redo apply. The source database is now at sequence 220.

- Sequences 100-220 must be available on disk on the source database host, so the source (primary) database can transfer them to the target (standby) database.

It is not uncommon for VLDBs to generate redo on a daily basis that are double-digit TB. Just the other day I talked to a customer whose database generated 15 TB of archive logs a day.

First, you must be able to transfer the redo from the source database (primary) to the target database (standby). This is simple math: If you have 15 TB redo a day, you should be able to transfer that using a 1,5 Gbps connection (amount of redo / 24 / 60 / 60 * 8).

If transferring redo becomes a problem, you can look into using redo transport compression. This can reduce the amount of data that must be transferred at the cost of CPU cycles. You can read more about it in the MOS note Redo Transport Compression in a Data Guard Environment (Doc ID 729551.1).

I learned from colleagues in the Maximum Availability Architecture (MAA) team that TDE Tablespace Encryption and redo transport compression doesn’t play very well together. If your source database is encrypted, you should not expect that much benefit from redo transport compression.

Next, redo must be applied on the target database. Is the target database capable of applying redo so fast? On Exadata the answer is most likely: YES – but as always in IT, it depends.

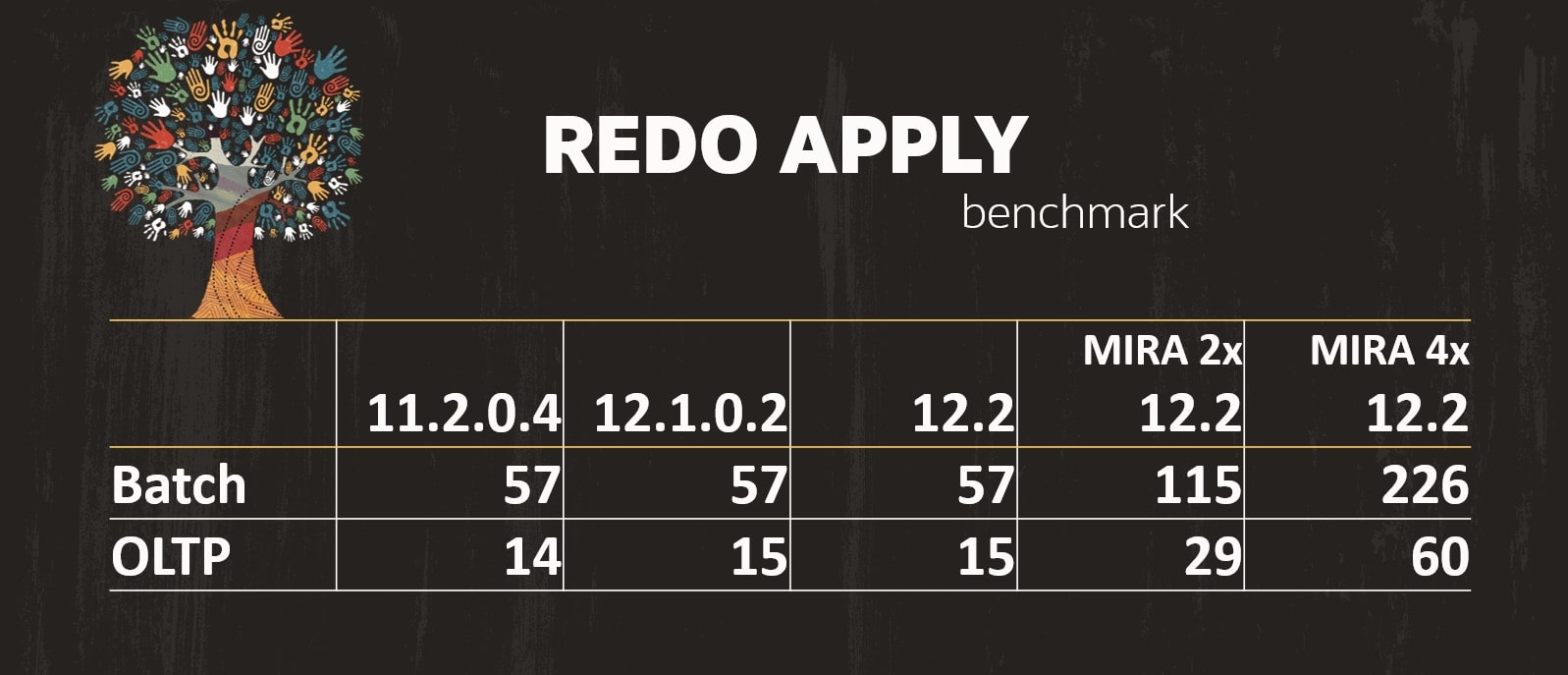

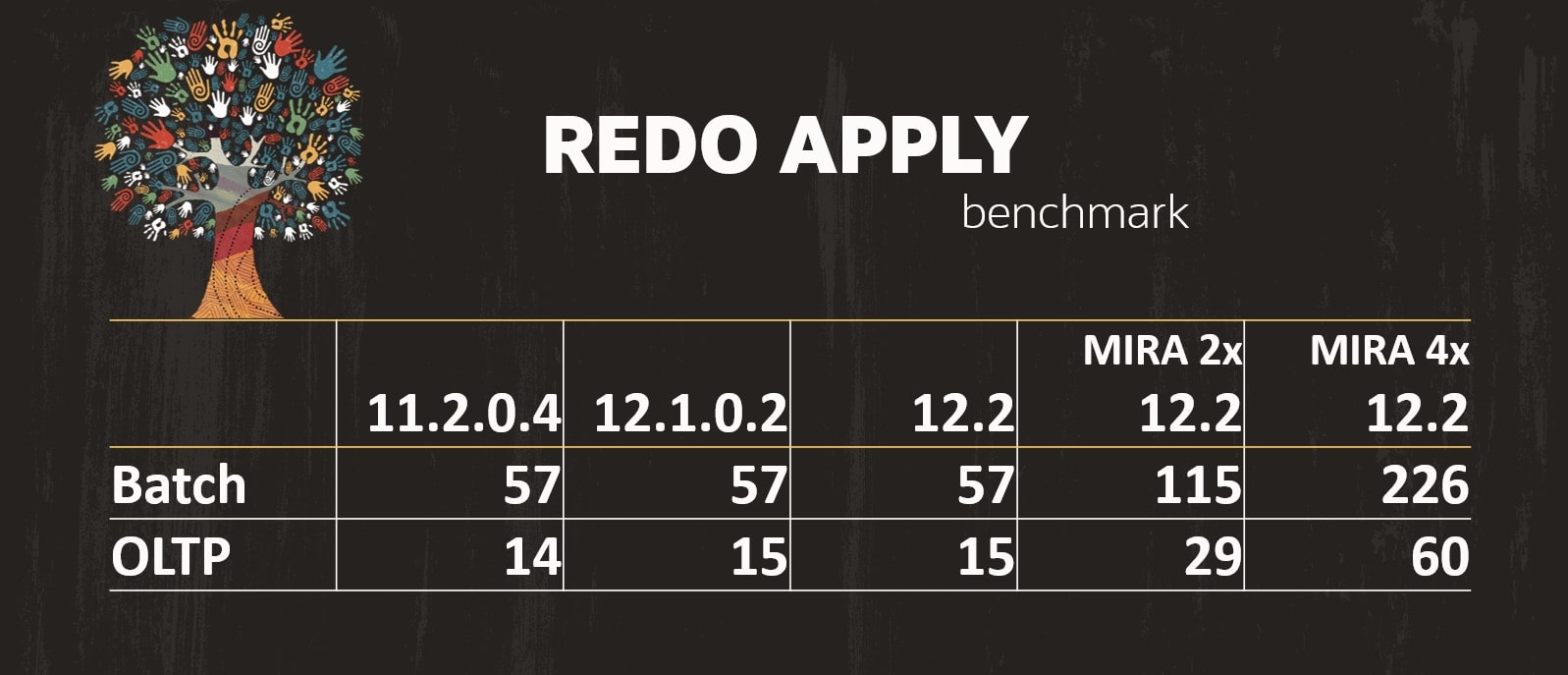

The numbers of the above graph comes from Redo Apply Best Practices – Oracle Data Guard and Active Data Guard. Based on your database release and the type of workload you have in the database; you can see the amount of redo that can be applied daily (in TB). The last two columns are using Multi-Instance Redo Apply (MIRA) with either two or four active RAC nodes. The numbers were generated on an Exadata.

The numbers of the above graph comes from Redo Apply Best Practices – Oracle Data Guard and Active Data Guard. Based on your database release and the type of workload you have in the database; you can see the amount of redo that can be applied daily (in TB). The last two columns are using Multi-Instance Redo Apply (MIRA) with either two or four active RAC nodes. The numbers were generated on an Exadata.

Backup of Target Database

How do you backup your target database in OCI? You want to have a valid backup from the very second that you switch over to the target database.

The target placeholder database that you originally created will be overwritten by ZDM. This means that you can’t configure and enable automatic backup in OCI in advance. You must wait until the migration has completed until you enable automatic backup.

You could:

- Extend the downtime window to allow automatic backup to be configured after the migration. Also, allow enough time for the first backup to complete.

- Or, do your own backup in OCI. This is more cumbersome but will allow you to open the database for business immediately after the switchover. But you are in charge of the backup now. All the various bits and pieces are available:

- Original backup is still in object storage.

- Archive logs are on disk – you can back them up manually.

- Perform incrementals if needed – put them somewhere safe.

- In case of emergency – glue it all together

Data Guard on Target Database

Similar to automatic backup, you can’t create a Data Guard Association until ZDM has completed the migration. The cloud tooling does not support creating the standby database through a cascading standby.

This means that you can’t build your OCI standby database until after ZDM has completed the migration – and the OCI database is the primary database. You can start to create the OCI Data Guard as soon as ZDM is done, but

- You must tolerate that the OCI Data Guard is missing

- Or, take downtime

Conclusion

Even huge Oracle Databases can be migrated to OCI using Zero Downtime Migration. You might need to make a few adjustments from the standard flow, but it is absolutely doable. Automatic backup and data guard can’t be created in OCI until after the migration. This might force you to take downtime.

Besides the actual migration, you should also do your best to ensure performance stability once the database is open for business. For that purpose, you should have a look at our webinar Performance Stability, Tips and Tricks and Underscores.

Other Blog Posts in This Series

The numbers of the above graph comes from

The numbers of the above graph comes from