A customer reached out to me:

I want upgrade a PDB from Oracle Database 19c to 23ai. It’s in a Base Database Service in OCI. I use the Remote clone feature in the OCI console but it fails with DCS-12300 because IMEDIA component is installed.

The task:

- Clone a PDB using the OCI Console Remote clone feature

- From a CDB on Oracle Database 19c to another CDB on Oracle Database 23ai

- Upgrade the PDB to Oracle Database 23ai

Let’s see what happens when you clone a PDB:

It fails, as explained by the customer.

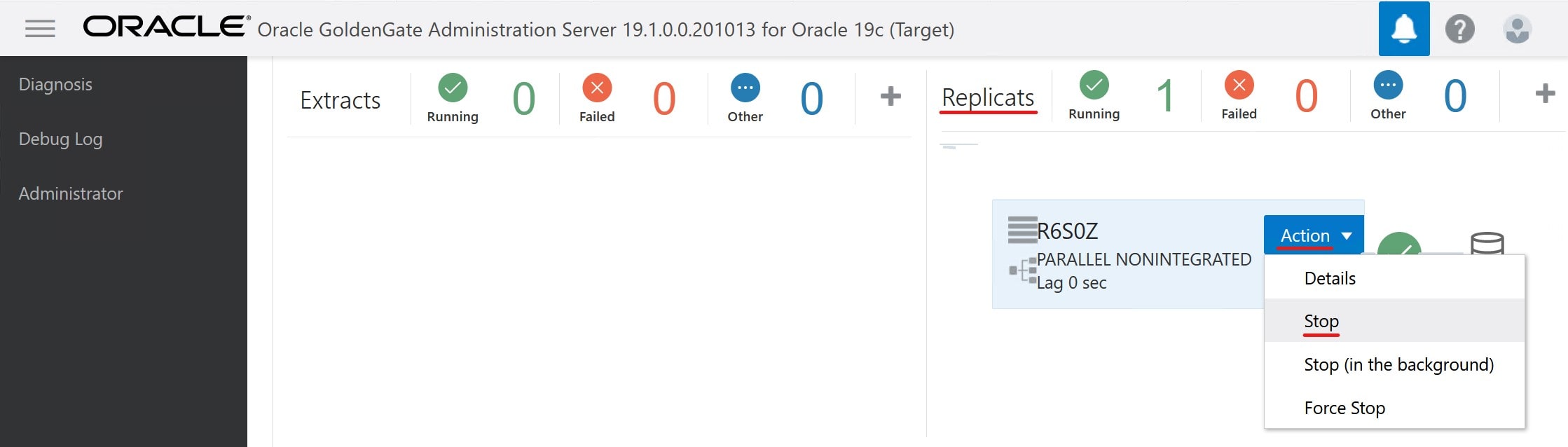

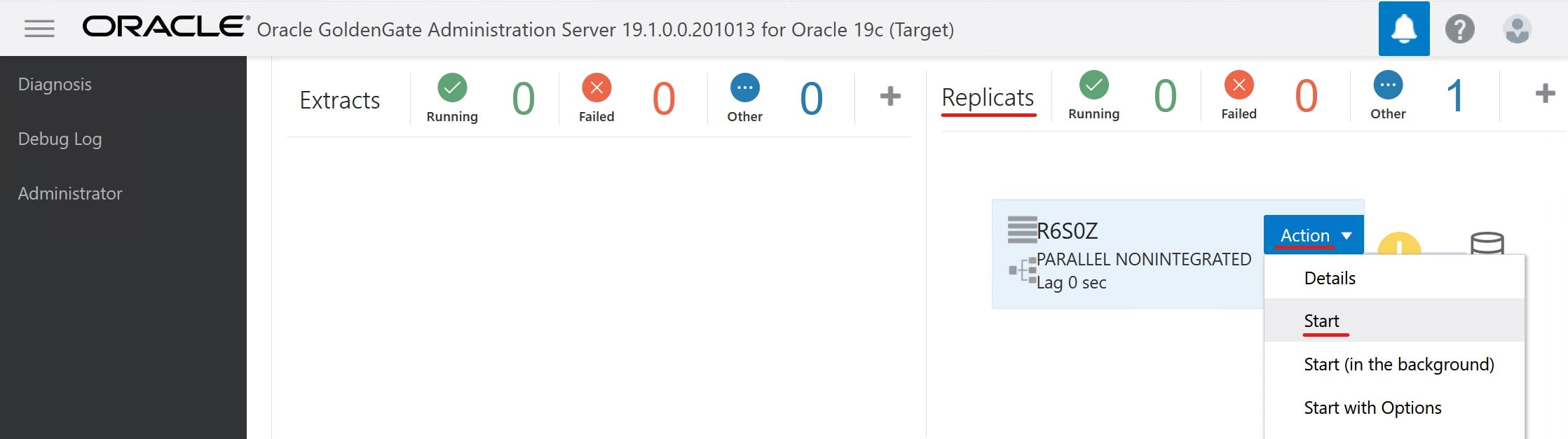

Let’s dig a little deeper. Connect as root to the target system and check the DCS agent.

$ dbcli list-jobs

ID Description Created Status

---------------------------------------- --------------------------------------------------------------------------- ----------------------------------- ----------

...

6e1fa60c-8572-4e08-ba30-cafb705c195e Remote Pluggable Database:SALES from SALES in db:CDB23 Tuesday, September 24, 2024, 05:04:13 UTC Failure

$ dbcli describe-job -i 6e1fa60c-8572-4e08-ba30-cafb705c195e

Job details

----------------------------------------------------------------

ID: 6e1fa60c-8572-4e08-ba30-cafb705c195e

Description: Remote Pluggable Database:SALES from SALES in db:CDB23

Status: Failure

Created: September 24, 2024 at 5:04:13 AM UTC

Progress: 35%

Message: DCS-12300:Failed to clone PDB SALES from remote PDB SALES. [[FATAL] [DBT-19407] Database option (IMEDIA) is not installed in Local CDB (CDB23).,

CAUSE: The database options installed on the Remote CDB(CDB19_979_fra.sub02121342350.daniel.oraclevcn.com) m

Error Code: DCS-12300

Cause: Error occurred during cloning the remote PDB.

Action: Refer to DCS agent log, DBCA log for more information.

...

What’s Going on?

First, IMEDIA stands for interMedia and is an old name for the Multimedia component. The ID of Multimedia is ORDIM.

Oracle desupported the Multimedia component:

Desupport of Oracle Multimedia Oracle Multimedia is desupported in Oracle Database 19c, and the implementation is removed. … Oracle Multimedia objects and packages remain in the database. However, these objects and packages no longer function, and raise exceptions if there is an attempt made to use them.

In the customer’s and my case, the Multimedia component is installed in the source PDB, but not present in the target CDB. The target CDB is on Oracle Database 23ai where this component is completely removed.

If you plug in a PDB that has more components than the CDB, you get a plug-in violation, and that’s causing the error.

Here’s how you can check whether Multimedia is installed:

select con_id, status

from cdb_registry

where comp_id='ORDIM'

order by 1;

Solution 1: AutoUpgrade

The best solution is to use AutoUpgrade. Here’s a blog post with all the details.

AutoUpgrade detects that multimedia is already present in the preupgrade phase. Here’s an extract from the preupgrade log file:

INFORMATION ONLY

================

7. Follow the instructions in the Oracle Multimedia README.txt file in <23

ORACLE_HOME>/ord/im/admin/README.txt, or MOS note 2555923.1 to determine

if Oracle Multimedia is being used. If Oracle Multimedia is being used,

refer to MOS note 2347372.1 for suggestions on replacing Oracle

Multimedia.

Oracle Multimedia component (ORDIM) is installed.

Starting in release 19c, Oracle Multimedia is desupported. Object types

still exist, but methods and procedures will raise an exception. Refer to

23 Oracle Database Upgrade Guide, the Oracle Multimedia README.txt file

in <23 ORACLE_HOME>/ord/im/admin/README.txt, or MOS note 2555923.1 for

more information.

When AutoUpgrade plugs in the PDB with Multimedia, it’ll see the plug-in violation. But AutoUpgrade is smart and knows that Multimedia is special. It knows that during the upgrade, it will execute the Multimedia removal script. So, it disregards the plug-in violation until the situation is resolved.

AutoUpgrade also handles the upgrade, so it’s a done deal. Easy!

Solution 2: Remove Multimedia

You can also manually remove the Multimedia component in the source PDB before cloning.

I grabbed these instructions from Mike Dietrich’s blog. They work for a 19c CDB:

cd $ORACLE_HOME/rdbms/admin

#First, remove ORDIM in all containers, except root

$ORACLE_HOME/perl/bin/perl catcon.pl -n 1 -C 'CDB$ROOT' -e -b imremdo_pdbs -d $ORACLE_HOME/ord/im/admin imremdo.sql

#Recompile

$ORACLE_HOME/perl/bin/perl catcon.pl -n 1 -e -b utlrp -d '''.''' utlrp.sql

#Last, remove ORDIM in root

$ORACLE_HOME/perl/bin/perl catcon.pl -n 1 -c 'CDB$ROOT' -e -b imremdo_cdb -d $ORACLE_HOME/ord/im/admin imremdo.sql

#Recompile

$ORACLE_HOME/perl/bin/perl catcon.pl -n 1 -e -b utlrp -d '''.''' utlrp.sql

#Remove leftover package in all containers

echo "drop package SYS.ORDIMDPCALLOUTS;" > vi dropim.sql

$ $ORACLE_HOME/perl/bin/perl $ORACLE_HOME/rdbms/admin/catcon.pl -n 1 -e -b dropim -d '''.''' dropim.sql

Without the Multimedia component cloning via the cloud tooling works, but you are still left with a PDB that you attend to.

If you’re not using AutoUpgrade, you will use a new feature called replay upgrade. The CDB will see that the PDB is a lower-version and start an automatic upgrade. However, you still have some manual pre- and post-upgrade tasks to do.

One of the reasons I prefer using AutoUpgrade.

Further Reading

For those interested, here are a few links to Mike Dietrich’s blog on components and Multimedia in particular:

The numbers of the above graph comes from

The numbers of the above graph comes from