Let me show you how to upgrade a single PDB to Oracle AI Database 26ai.

This is called an unplug-plug upgrade and is much faster than a full CDB upgrade.

But what about Data Guard? I use deferred recovery, meaning I must restore the PDB after the upgrade.

Let’s see how it works.

1. Upgrade On Primary

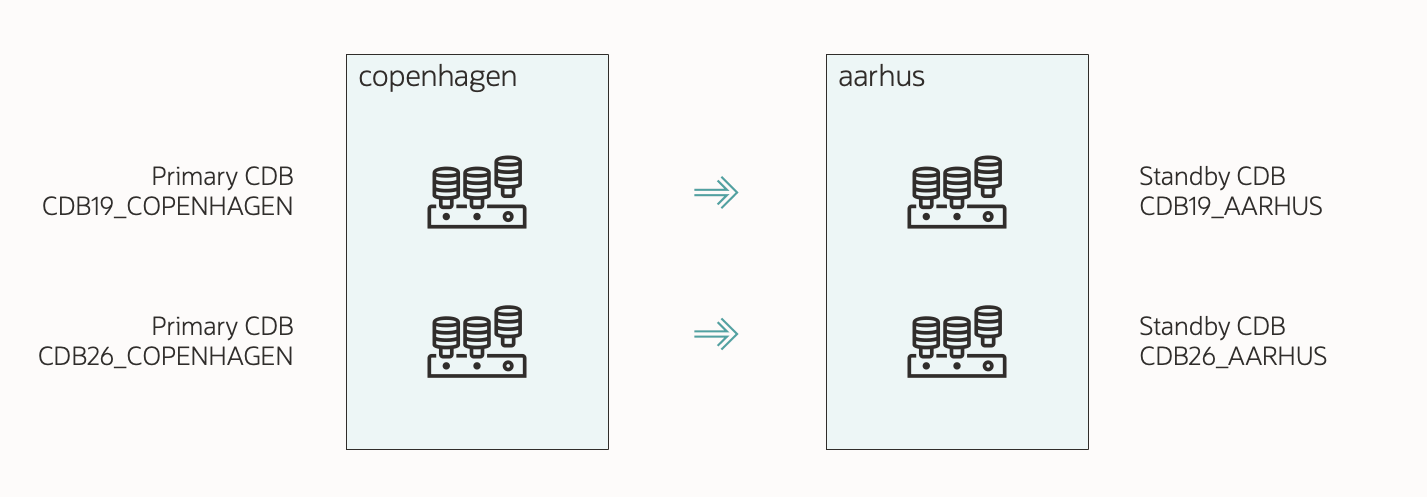

I’ve already prepared my database and installed a new Oracle home. I’ve also created a new container database or decided to use an existing one. The new container database is configured for Data Guard.

The maintenance window has started, and users have left the database.

-

This is my AutoUpgrade config file:

global.global_log_dir=/home/oracle/autoupgrade/logs/SALES-CDB26

upg1.source_home=/u01/app/oracle/product/19

upg1.target_home=/u01/app/oracle/product/26

upg1.sid=CDB19

upg1.pdbs=SALES

upg1.target_cdb=CDB26

- I specify the source and target Oracle homes. I’ve already installed the target Oracle home.

- sid contains the SID of my current CDB.

- target_cdb specifies the SID of the container where I plug into.

- pdbs is the PDB that I want to upgrade. I can specify additional PDBs in a comma-separated list.

- I want to reuse the existing data files, so I omit target_pdb_copy_option.

- To plug in with deferred recovery, I can either omit manage_standbys_clause or set manage_standbys_clause=none.

- Check the appendix for additional parameters.
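Before I start AutoUpgrade, I like to double-check that the config file matches the plan described above. Here is a minimal sketch of such a check. check_cfg is a hypothetical helper, not part of AutoUpgrade, and it assumes that a manage_standbys_clause value ending in none means deferred recovery.

```shell
#!/bin/sh
# Sketch: sanity-check an AutoUpgrade config file for an unplug-plug upgrade
# that reuses the data files and plugs in with deferred recovery.
# check_cfg is a hypothetical helper, not an AutoUpgrade feature.

check_cfg() {
    cfg=$1
    rc=0

    # Ignore comment lines; only active parameters matter.
    active=$(grep -v '^[[:space:]]*#' "$cfg" || true)

    # Reusing data files means target_pdb_copy_option must be omitted.
    if printf '%s\n' "$active" | grep -q 'target_pdb_copy_option'; then
        echo "WARN: target_pdb_copy_option is set; data files will be copied"
        rc=1
    fi

    # Deferred recovery: manage_standbys_clause is omitted or ends in "none".
    # Assumption: any other value requests different standby handling.
    if printf '%s\n' "$active" | grep 'manage_standbys_clause' \
        | grep -Eviq 'none[[:space:]]*$'; then
        echo "WARN: manage_standbys_clause is not none; plug-in is not deferred"
        rc=1
    fi

    return "$rc"
}
```

For example, check_cfg SALES-CDB26.cfg returns 0 for the config file shown above and a non-zero status, plus a warning, if one of the two parameters contradicts the plan.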

-

I start AutoUpgrade in deploy mode:

java -jar autoupgrade.jar -config SALES-CDB26.cfg -mode deploy

- AutoUpgrade starts by analyzing the database for upgrade readiness and executes the pre-upgrade fixups.

- Next, it creates a manifest file and unplugs from source CDB.

- Then, it plugs into the target CDB with deferred recovery. At this point, the standby is not protecting the new PDB.

- Finally, it upgrades the PDB.

-

While the job progresses, I monitor it:

upg> lsj -a 30

- The -a 30 option automatically refreshes the information every 30 seconds.

- I can also use status -job 100 -a 30 to get detailed information about a specific job.

-

In the end, AutoUpgrade completes the upgrade:

Job 100 completed

------------------- Final Summary --------------------

Number of databases [ 1 ]

Jobs finished [1]

Jobs failed [0]

Jobs restored [0]

Jobs pending [0]

The following PDB(s) were created with standbys=none option. Refer to the postcheck result CDB19_COPENHAGEN_postupgrade.log for more details on manual actions needed.

SALES

Please check the summary report at:

/home/oracle/autoupgrade/logs/SALES-CDB26/cfgtoollogs/upgrade/auto/status/status.html

/home/oracle/autoupgrade/logs/SALES-CDB26/cfgtoollogs/upgrade/auto/status/status.log

- This includes the post-upgrade checks and fixups.

- AutoUpgrade informs me that it plugged in with deferred recovery. To protect the PDB on the standby, I must restore it.
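If I run AutoUpgrade from a script, I can let the script read the final summary for me. The sketch below assumes that I saved the console output to a file (for example with tee) and that the summary lines look like the ones shown above. The function names are my own.

```shell
#!/bin/sh
# Sketch: inspect a saved copy of the AutoUpgrade console output.
# Assumption: the file contains the "Final Summary" lines as printed above.

# Print the number from the "Jobs failed [N]" line.
jobs_failed() {
    sed -n 's/^[[:space:]]*Jobs failed[[:space:]]*\[[[:space:]]*\([0-9][0-9]*\)[[:space:]]*].*/\1/p' "$1" \
        | head -n 1
}

# Succeed if AutoUpgrade reported a plug-in with the standbys=none option,
# which means the PDB must still be restored on the standby.
needs_standby_restore() {
    grep -q 'standbys=none' "$1"
}
```

A calling script could stop when jobs_failed prints anything other than 0, and print a reminder about the standby restore when needs_standby_restore succeeds.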

-

I review the AutoUpgrade Summary Report. The path is printed to the console:

vi /home/oracle/autoupgrade/logs/SALES-CDB26/cfgtoollogs/upgrade/auto/status/status.log

-

I take care of the post-upgrade tasks.

-

AutoUpgrade drops the PDB from the source CDB.

- The data files used by the target CDB are still in the original location, in the directory structure of the source CDB.

- Take care you don’t delete them by mistake; they are now used by the target CDB.

- Optionally, move them into the correct location using online datafile move.

2. Restore PDB On Standby

-

I execute all commands on the same host – the standby system.

-

On the standby, I verify that recovery status is disabled:

SQL> select open_mode, recovery_status

from v$pdbs where name='SALES';

OPEN_MODE RECOVERY_STATUS

____________ __________________

MOUNTED DISABLED

- This means that I plugged in with deferred recovery.

- The standby is not protecting this PDB.

-

Next, I connect to the standby using RMAN, and I restore the PDB:

connect target /

run{

allocate channel disk1 device type disk;

allocate channel disk2 device type disk;

set newname for pluggable database SALES to new;

restore pluggable database SALES

from service <primary_service>

section size 64G;

}

- You can add more channels depending on your hardware.

- Replace SALES with the name of your PDB.

- <primary_service> is a connect string to the primary database.
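Since the number of channels depends on the hardware, I sometimes generate the RMAN script instead of editing it by hand. This is only a sketch: gen_restore_rman is a hypothetical helper that prints the same commands as above with a variable number of disk channels.

```shell
#!/bin/sh
# Sketch: print the RMAN restore script shown above with N disk channels.
# gen_restore_rman is a hypothetical helper; it only writes text and does
# not connect to any database.

gen_restore_rman() {
    pdb=$1            # name of the PDB to restore
    service=$2        # connect string to the primary database
    channels=${3:-2}  # number of disk channels, default 2 as in the example

    echo "connect target /"
    echo "run {"
    i=1
    while [ "$i" -le "$channels" ]; do
        echo "  allocate channel disk$i device type disk;"
        i=$((i + 1))
    done
    echo "  set newname for pluggable database $pdb to new;"
    echo "  restore pluggable database $pdb"
    echo "    from service $service"
    echo "    section size 64G;"
    echo "}"
}
```

I can redirect the output to a file, review it, and then run it on the standby host with rman cmdfile=<file>.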

-

Next, I connect to the standby database using Data Guard broker and turn off redo apply:

edit database <stdby_unique_name> set state='apply-off';

-

Back in RMAN, still connected to the standby, I switch to the newly restored data files:

switch pluggable database SALES to copy;

-

Then, I connect to the standby and generate a list of commands that will online all the data files:

alter session set container=SALES;

select 'alter database datafile '||''''||name||''''||' online;' from v$datafile;

- Save the commands for later.

- There should be one row for each data file.

-

If my standby is an Active Data Guard, I must restart it into MOUNT mode.

alter session set container=CDB$ROOT;

shutdown immediate

startup mount

-

Now, I can re-enable recovery and online the data files:

alter session set container=SALES;

alter pluggable database enable recovery;

alter database datafile <file1> online;

alter database datafile <file2> online;

...

alter database datafile <filen> online;

- I must connect to the PDB.

- I must execute the alter database datafile ... online command for each data file.
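With many data files, typing the commands by hand is error-prone. The following sketch builds the complete SQL script from a list of data file names. It assumes that I saved the names from v$datafile to a plain text file, one name per line; gen_enable_recovery is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: build the SQL that re-enables recovery and onlines each data file.
# Input: data file names on stdin, one per line (assumed to come from
# v$datafile in the PDB). The function only prints SQL text.

gen_enable_recovery() {
    pdb=$1
    echo "alter session set container=$pdb;"
    echo "alter pluggable database enable recovery;"
    while IFS= read -r f; do
        # Skip empty lines.
        [ -n "$f" ] || continue
        # Double any single quote so the name stays a valid SQL literal.
        esc=$(printf '%s' "$f" | sed "s/'/''/g")
        echo "alter database datafile '$esc' online;"
    done
}
```

For example, gen_enable_recovery SALES < sales_files.txt prints one alter database datafile ... online command per data file, which I can review before running it on the standby.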

-

I turn on redo apply:

edit database <stdby_unique_name> set state='apply-on';

-

At this point, the standby protects my PDB.

-

After a minute or two, I check the Data Guard config:

validate database <stdby_unique_name>;

-

Once my standby is in sync, I can do a switchover as the ultimate test:

switchover to <stdby_unique_name>;

-

Now, I connect to the new primary and ensure the PDB opens in read write mode and unrestricted:

select open_mode, restricted

from v$pdbs

where name='SALES';

OPEN_MODE RESTRICTED

_____________ _____________

READ WRITE NO

-

You can find the full procedure in Making Use Deferred PDB Recovery and the STANDBYS=NONE Feature with Oracle Multitenant (KB90519). Check sections Steps for Preparing to enable recovery of the PDB and Steps required for enabling recovery on the PDB after the files have been copied.

That’s It!

With AutoUpgrade, you can easily upgrade a single PDB using an unplug-plug upgrade. The easiest way to handle the standby is to restore the pluggable database after the upgrade.

Check the other blog posts related to upgrade to Oracle AI Database 26ai.

Happy upgrading!

Appendix

Rollback Options

When you perform an unplug-plug upgrade, you can’t use Flashback Database as a rollback method. You need to rely on other methods, like:

- Copy data files by using target_pdb_copy_option.

- RMAN backups.

- Storage snapshots.

In this blog post, I reuse the data files, so I must have an alternate rollback plan.

Copy or Re-use Data Files

When you perform an unplug-plug upgrade, you must decide what to do with the data files.

| | Copy | Re-use |
| --- | --- | --- |
| Plug-in Time | Slow, needs to copy data files. | Fast, re-uses existing data files. |
| Disk space | Need room for a full copy of the data files. | No extra disk space. |
| Rollback | Source data files are left untouched. Re-open PDB in source CDB. | PDB in source CDB unusable. Rely on other rollback method. |
| AutoUpgrade | Enable using target_pdb_copy_option. | Default. Omit parameter target_pdb_copy_option. |
| Location after plug-in | The data files are copied to the desired location. | The data files are left in the source CDB location. Don’t delete them by mistake. Consider moving them using online data files move. |
| Syntax used | CREATE PLUGGABLE DATABASE ... COPY | CREATE PLUGGABLE DATABASE ... NOCOPY |

target_pdb_copy_option

Use this parameter only when you want to copy the data files.

- target_pdb_copy_option=file_name_convert=('/u02/oradata/SALES', '/u02/oradata/NEWSALES','search-1','replace-1')

  When you have data files in a regular file system. The value is a list of pairs of search/replace strings.

- target_pdb_copy_option=file_name_convert=none

  When you want data files in ASM or use OMF in a regular file system. The database automatically generates new file names and puts data files in the right location.

- target_pdb_copy_option=file_name_convert=('+DATA/SALES', '+DATA/NEWSALES')

  This is a very rare configuration. Only when you have data files in ASM, but don’t use OMF. If you have ASM, I strongly recommend using OMF.

You can find further explanation in the documentation.
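To avoid quoting mistakes in the search/replace list, the config line can be generated. This is a sketch under one assumption: that the parameter takes the prefix and the PDB name in the same way as target_pdb_name (prefix.parameter.PDB). copy_option_line is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: print a target_pdb_copy_option line for an AutoUpgrade config file.
# Assumption: the parameter is written as <prefix>.target_pdb_copy_option.<PDB>.
# With no search/replace pairs, the value is file_name_convert=none (OMF/ASM).

copy_option_line() {
    prefix=$1
    pdb=$2
    shift 2

    if [ "$#" -eq 0 ]; then
        echo "$prefix.target_pdb_copy_option.$pdb=file_name_convert=none"
        return 0
    fi

    # The value is a list of pairs, so an odd number of strings is an error.
    if [ $(( $# % 2 )) -ne 0 ]; then
        echo "error: search/replace strings must come in pairs" >&2
        return 1
    fi

    list=""
    while [ "$#" -gt 0 ]; do
        list="${list:+$list, }'$1', '$2'"
        shift 2
    done
    echo "$prefix.target_pdb_copy_option.$pdb=file_name_convert=($list)"
}
```

For example, copy_option_line upg1 SALES /u02/oradata/SALES /u02/oradata/NEWSALES prints the regular file system variant, while copy_option_line upg1 SALES prints the file_name_convert=none variant.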

Move

The CREATE PLUGGABLE DATABASE statement also has a MOVE clause in addition to COPY and NOCOPY. In a regular file system, the MOVE clause works as you would expect. However, in ASM, it is implemented via copy-and-delete, so you might as well use the COPY option.

AutoUpgrade doesn’t support the MOVE clause.

Compatible

During plug-in, the PDB automatically inherits the compatible setting of the target CDB. You don’t have to raise the compatible setting manually.

Typically, the target CDB has a higher compatible and the PDB raises it on plug-in. This means you don’t have the option of downgrading.

If you want to preserve the option of downgrading, be sure to set the compatible parameter in the target CDB to the same value as the source CDB.

Pre-plugin Backups

After doing an unplug-plug upgrade, you can restore a PDB using a combination of backups from before and after the plug-in operation. Backups from before the plug-in are called pre-plugin backups.

A restore using pre-plugin backups is more complicated; however, AutoUpgrade eases that by exporting the RMAN backup metadata automatically.

I suggest that you:

- Start a backup immediately after the upgrade, so you don’t have to use pre-plugin backups.

- Practice restoring with pre-plugin backups.

What If My Database Is A RAC Database?

There are no changes to the procedure if you have an Oracle RAC database. AutoUpgrade handles it transparently. You must manually recreate services in the target CDB using srvctl.

What If I Use Oracle Restart?

No changes. You must manually recreate services in the target CDB using srvctl.

What If My Database Is Encrypted?

AutoUpgrade fully supports upgrading an encrypted PDB.

You’ll need to input the source and target CDB keystore passwords into the AutoUpgrade keystore. You can find the details in a previous blog post.

In the container database, AutoUpgrade always adds the database encryption keys to the unified keystore. After the upgrade, you can switch to an isolated keystore.

Before restoring the pluggable database on the standby, you must copy the keystore from the primary to the standby.

Other Config File Parameters

The config file shown above is a basic one. Let me address some of the additional parameters you can use.

-

timezone_upg: AutoUpgrade upgrades the PDB time zone file after the actual upgrade. This might take significant time if you have lots of TIMESTAMP WITH TIME ZONE data. If so, you can postpone the time zone file upgrade or perform it in a more time-efficient manner. In multitenant, a PDB can use a different time zone file than the CDB.

-

target_pdb_name: AutoUpgrade renames the PDB. I must specify the original PDB name (SALES) as a suffix to the parameter:

upg1.target_pdb_name.SALES=NEWSALES

If I have multiple PDBs, I can specify target_pdb_name multiple times:

upg1.pdbs=SALES,OLDNAME1,OLDNAME2

upg1.target_pdb_name.SALES=NEWSALES

upg1.target_pdb_name.OLDNAME1=NEWNAME1

upg1.target_pdb_name.OLDNAME2=NEWNAME2
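When I rename many PDBs, I can generate these lines from a list of old and new names. The sketch below is a hypothetical helper, rename_lines, that takes the config prefix and a number of OLD:NEW pairs and prints the pdbs and target_pdb_name parameters in the form shown above.

```shell
#!/bin/sh
# Sketch: print the pdbs and target_pdb_name lines for a list of renames.
# Arguments: config prefix, then one OLD:NEW pair per PDB.
# rename_lines is a hypothetical helper; it only prints config text.

rename_lines() {
    prefix=$1
    shift

    # Build the comma-separated list of original PDB names.
    pdbs=""
    for pair in "$@"; do
        old=${pair%%:*}
        pdbs="${pdbs:+$pdbs,}$old"
    done
    echo "$prefix.pdbs=$pdbs"

    # One target_pdb_name line per PDB, suffixed with the original name.
    for pair in "$@"; do
        old=${pair%%:*}
        new=${pair#*:}
        echo "$prefix.target_pdb_name.$old=$new"
    done
}
```

Calling rename_lines upg1 SALES:NEWSALES OLDNAME1:NEWNAME1 OLDNAME2:NEWNAME2 prints the four lines from the example above.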

-

before_action / after_action: Extend AutoUpgrade with your own functionality by using scripts before or after the job.

-

Check the documentation for the full list.

Multiple Standby Databases

You must restore the PDB to each standby database.

If you have multiple standby databases, it’s a lot of work for the primary to handle the restore pluggable database command from all standbys.

Imagine we have three standby databases. Here’s an alternative approach:

- First, standby 1 restores from primary.

- Next, standby 2 restores from standby 1.

- At the same time, standby 3 restores from primary.

- This spreads the load throughout your databases, allowing you to complete faster.
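The same idea scales to more standby databases: in each round, every database that already has the PDB serves one more standby. Here is a small sketch that prints such a plan. plan_restores is a hypothetical helper and the database names are placeholders; it only plans the order and doesn’t run any restore.

```shell
#!/bin/sh
# Sketch: plan cascading restores so the primary is not the only source.
# First argument: the database that already has the PDB (the primary).
# Remaining arguments: the standby databases that still need the restore.
# In each round, every database that has the PDB serves one new standby.

plan_restores() {
    holders=$1
    shift
    round=1
    while [ "$#" -gt 0 ]; do
        # Use the most recently restored databases as sources first.
        rev=""
        for h in $holders; do
            rev="$h $rev"
        done

        new=""
        for src in $rev; do
            [ "$#" -gt 0 ] || break
            echo "round $round: $1 restores from $src"
            new="$new $1"
            shift
        done

        # Standbys restored in this round can serve others in the next one.
        holders="$holders$new"
        round=$((round + 1))
    done
}
```

With plan_restores primary standby1 standby2 standby3, standby1 restores from primary in round 1, and in round 2 standby2 restores from standby1 while standby3 restores from primary. That is the same order as in the list above.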