Let me show you how you can migrate an Oracle Database into an Autonomous Database. My source database is a PDB running on 12.1.0.2. I want to migrate it directly into an Oracle Autonomous Transaction Processing (ATP) database. I will do an offline migration using just Oracle Data Pump.

Prerequisites

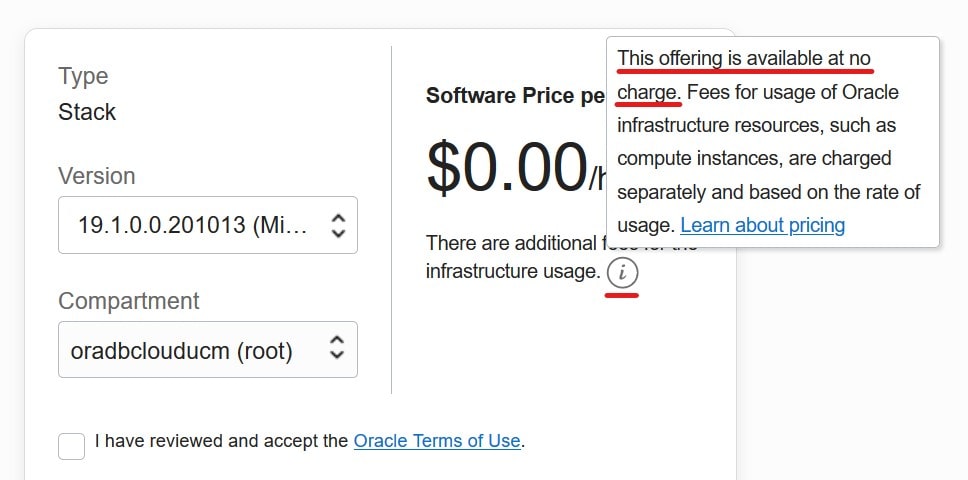

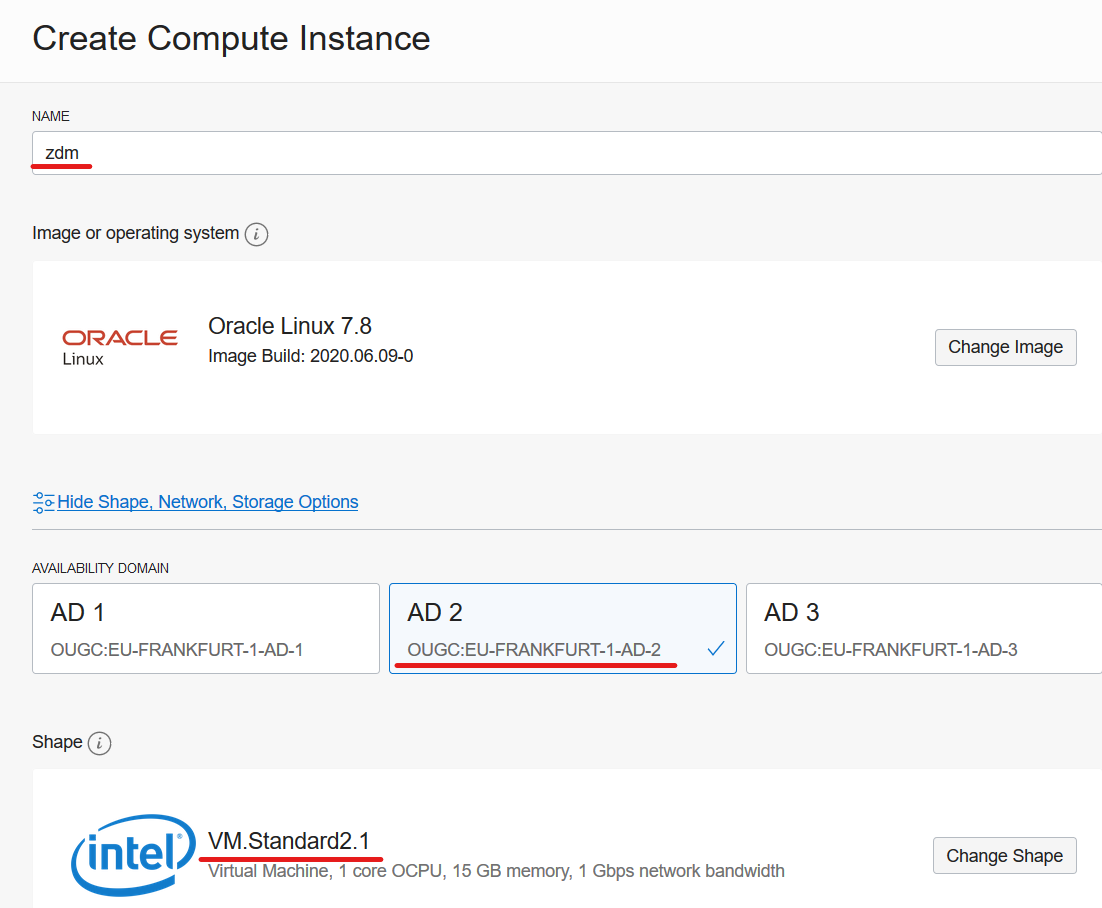

- I need a Zero Downtime Migration (ZDM) service host. I already blogged about how to create such. The computer is called zdmhost and the user is called zdmuser.

- My source database is a PDB called srcpdb, the CDB is called srccdb, and the host is named srchost. All my data is in the schema SH.

- I have created an ATP database. I name it tgtadb.

Prepare Source

My source database is a PDB. If you have a non-CDB perform all the steps in your non-CDB.

First, set streams pool to a reasonable size (needed by Data Pump):

alter session set container=CDB$ROOT;

--At least 256M

alter system set streams_pool_size=256M scope=both;

Generate a list of tablespaces. In ADB there is only one tablespace named DATA (unless you have more than 32 TB of data, then you get DATA_2, DATA_3 and so forth). All tablespaces must be re-mapped to that. Add all schemas to the in-list:

alter session set container=SRCPDB;

select distinct tablespace_name

from (

select distinct tablespace_name from dba_segments where owner in ('SH')

union

select distinct default_tablespace from dba_users where username in ('SH')

union

select distinct tablespace_name from dba_ts_quotas where dropped = 'NO' and username in ('SH')

);

I need to create a directory that can be used by Data Pump:

[oracle@srchost]$ mkdir -p /u01/app/oracle/datapump/mydirsrc

Optional. The best starting point for Data Pump is good and accurate dictionary statistics. This helps Data Pump extract the information as fast as possible:

exec dbms_stats.gather_dictionary_stats;

Prepare ZDM

The ZDM service host must be able to resolve the host name of the source database host:

[root@zdmhost]$ echo "<source IP address> srchost" >> /etc/hosts

I put my private SSH key to the source database host into ~/.ssh directory. Ensure permissions are set properly:

[zdmuser@zdmhost]$ cp srchost_key_file ~/.ssh

[zdmuser@zdmhost]$ chmod 400 ~/.ssh/srchost_key_file

Test the connection. I connect as opc, but you might have a different user. Read more about access to the database host in the documentation:

[zdmuser@zdmhost]$ ssh -i ~/.ssh/srchost_key_file opc@srchost date

Prepare Response File

I will use a template response file as the basis for my response file:

[zdmuser@zdmhost]$ cp $ZDM_HOME/rhp/zdm/template/zdm_logical_template.rsp ~/logical_offline.rsp

[zdmuser@zdmhost]$ chmod 700 ~/logical_offline.rsp

This is the response file that I end up with (see appendix D for an explanation):

MIGRATION_METHOD=OFFLINE_LOGICAL

DATA_TRANSFER_MEDIUM=OSS

SOURCEDATABASE_ADMINUSERNAME=SYSTEM

SOURCEDATABASE_CONNECTIONDETAILS_HOST=srchost

SOURCEDATABASE_CONNECTIONDETAILS_PORT=1521

SOURCEDATABASE_CONNECTIONDETAILS_SERVICENAME=srcpdb....com

TARGETDATABASE_OCID=ocid1.autonomousdatabase.oc1.eu-frankfurt-1....

TARGETDATABASE_ADMINUSERNAME=ADMIN

OCIAUTHENTICATIONDETAILS_USERPRINCIPAL_TENANTID=ocid1.tenancy.oc1....

OCIAUTHENTICATIONDETAILS_USERPRINCIPAL_USERID=ocid1.user.oc1....

OCIAUTHENTICATIONDETAILS_USERPRINCIPAL_FINGERPRINT=58:b9:...

OCIAUTHENTICATIONDETAILS_USERPRINCIPAL_PRIVATEKEYFILE=/home/zdmuser/.oci/oci_api_key.pem

OCIAUTHENTICATIONDETAILS_REGIONID=eu-frankfurt-1

DATAPUMPSETTINGS_JOBMODE=SCHEMA

DATAPUMPSETTINGS_DATAPUMPPARAMETERS_IMPORTPARALLELISMDEGREE=2

DATAPUMPSETTINGS_DATAPUMPPARAMETERS_EXPORTPARALLELISMDEGREE=2

DATAPUMPSETTINGS_DATABUCKET_NAMESPACENAME=oradbclouducm

DATAPUMPSETTINGS_DATABUCKET_BUCKETNAME=zdm-staging

DATAPUMPSETTINGS_EXPORTDIRECTORYOBJECT_NAME=MYDIRSRC

DATAPUMPSETTINGS_EXPORTDIRECTORYOBJECT_PATH=/u01/app/oracle/datapump/mydirsrc

DATAPUMPSETTINGS_CREATEAUTHTOKEN=FALSE

DATAPUMPSETTINGS_METADATAREMAPS-1=type:REMAP_SCHEMA,oldValue:SH,newValue:SH2

DATAPUMPSETTINGS_METADATAREMAPS-2=type:REMAP_TABLESPACE,oldValue:USERS,newValue:DATA

DATAPUMPSETTINGS_METADATAREMAPS-3=type:REMAP_TABLESPACE,oldValue:TEST,newValue:DATA

INCLUDEOBJECTS-1=owner:SH

A few comments:

- I am remapping SH to SH2 because SH already exist in ADB as a common user. I want to import into a new user.

- Check the appendix for recommendations on setting parallel degree. Remember scaling up on CPUs in ADB happens fast and online. If you have a lot of data, you can really benefit from scaling up during import.

- The list of tablespaces that I created earlier is used to generate the list of tablespace remaps.

Perform Evaluation

I am now ready to perform a migration evaluation. It is a dry run of the migration and performs various sanity checks. Nothing is changed during the evaluation:

[zdmuser@zdmhost]$ $ZDM_HOME/bin/zdmcli migrate database \

-rsp /home/zdmuser/logical_offline.rsp \

-sourcenode srchost \

-sourcedb SRCCDB_fra3dd \

-srcauth zdmauth \

-srcarg1 user:opc \

-srcarg2 identity_file:/home/zdmuser/.ssh/srchost_key_file \

-srcarg3 sudo_location:/usr/bin/sudo \

-eval

A few comments:

sourcenodeis the host name of the source database host. The name must be resolvable which I why I put it into /etc/hosts already.sourcedbis the DB_UNIQUE_NAME of the source database.srcarg1is the name of the user that I connect as to the source database host. You might need to change that.srcarg2is the location of the private key file that I use to connect via SSH.

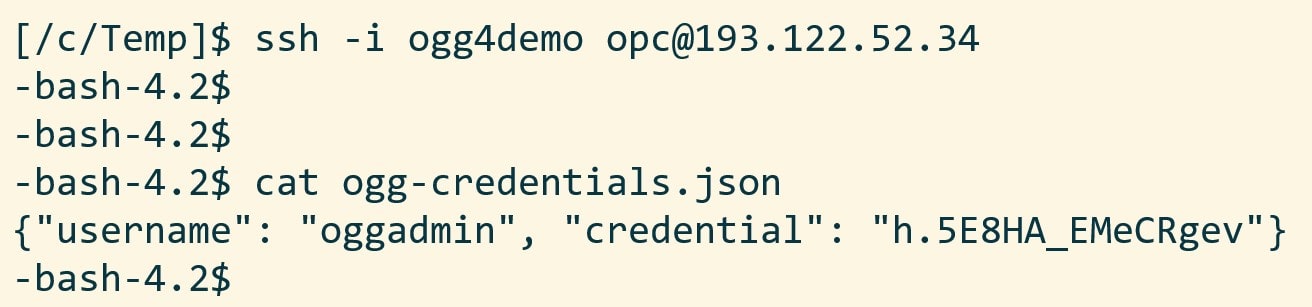

Next, I am prompted for passwords and my auth token. It also prompts for an encryption password. This is used to encrypt and decrypt the Data Pump dump file. Generate a secure password. I also get a job ID.

The migration evaluation is then started, and I can use the job ID to monitor it:

[zdmuser@zdmhost]$ $ZDM_HOME/bin/zdmcli query job -jobid <job ID>

However, I prefer to get more details, so I tail the ZDM log file instead. This little one-liner finds the newest file and tails it:

[zdmuser@zdmhost]$ tail -n 50 -f "`ls -td /u01/app/oracle/chkbase/scheduled/*log | head -1`"

And I get this level of detail:

zdmhost: 2021-05-28T06:25:25.518Z : Executing phase ZDM_PRE_MIGRATION_ADVISOR

zdmhost: 2021-05-28T06:25:31.883Z : Source PDB name : SRCPDB

zdmhost: 2021-05-28T06:25:32.410Z : Running CPAT (Cloud Premigration Advisor Tool) on the source node srchost ...

zdmhost: 2021-05-28T06:25:38.533Z : Premigration advisor output:

Cloud Premigration Advisor Tool Version 21.0.0

Cloud Premigration Advisor Tool completed with overall result: WARNING

Cloud Premigration Advisor Tool generated report location: /u01/app/oracle/zdm/zdm_SRCCDB_fra3dd_6/out/premigration_advisor_report.json

Start Migration

When the evaluation passes, I can start the real migration. This is an offline migration, so I need to drain the database before I start. Kick off the users and ensure that they don’t reconnect.

I am re-using the same command line, but I have removed the -eval option:

[zdmuser@zdmhost]$ $ZDM_HOME/bin/zdmcli migrate database \

-rsp /home/zdmuser/logical_offline.rsp \

-sourcenode srchost \

-sourcedb SRCCDB_fra3dd \

-srcauth zdmauth \

-srcarg1 user:opc \

-srcarg2 identity_file:/home/zdmuser/.ssh/srchost_key_file \

-srcarg3 sudo_location:/usr/bin/sudo

Again, I have to input the passwords as in eval mode. Use the job ID to monitor the progress or tail the log file.

That’s it. I have now migrated into an Autonomous Database in OCI!

I have a few post-migration tasks to carry out:

- I prefer to shut down the source database to ensure that use it anymore.

- Take care of the optimizer statistics as described in this blog post. Since your target is an Autonomous Database I would recommend to not move your statistics preferences, and in addition, simply regather statistics using

DBMS_STATS.GATHER_DATABASE_STATS. - Gather dictionary statistics (

DBMS_STATS.GATHER_DICTIONARY_STATS). After the import the data dictionary is now full of a lot of new data – the metadata about your tables and other objects. To ensure a smooth running database, gather dictionary statistics. - Figure out what to do with the Data Pump dump and log files that are stored in my object storage bucket.

I showed the simple, offline method. However, if you want to use the online method without downtime, have a look at my colleague Sinan’s blog post.

Other Blog Posts in This Series

- Introduction

- Install And Configure ZDM

- Physical Online Migration

- Physical Online Migration to DBCS

- Physical Online Migration to ExaCS

- Physical Online Migration and Testing

- Physical Online Migration of Very Large Databases

- Logical Online Migration

- Logical Online Migration to DBCS

- Logical Offline Migration to Autonomous Database (this post)

- Logical Online Migration and Testing

- Logical Online Migration of Very Large Databases

- Logical Online and Sequences

- Logical Migration and Statistics

- Logical Migration and the Final Touches

- Create GoldenGate Hub

- Monitor GoldenGate Replication

- The Pro Tips

Appendix

A – Update Cloud Premigration Advisor Tool

As part of the migration ZDM will use the Cloud Premigration Advisor Tool (CPAT) to check your source database. The version of CPAT that ships with ZDM is not the latest one. So, you might be missing out on bug fixes and new recommendations. If you want to get the latest and greatest recommendations, I suggest that you manually update the CPAT tool in your ZDM home. You can find instructions in the MOS note Cloud Premigration Advisor Tool (CPAT) Analyzes Databases for Suitability of Cloud Migration (Doc ID 2758371.1)

B – Ignore Certain Data Pump Errors

If there is an error in either your Data Pump export or import, then ZDM will error out. But by default, ZDM will ignore the following errors:

- ORA-31684: Object type string already exists

- ORA-39111: Dependent object type string skipped, base object type string already exists

- ORA-39082 Object type string created with compilation warnings

If you know that your data exports or imports with a specific error and you want to ignore it then you can add your own errors. The list of ignorable errors is maintained by the ZDM service host and it applies to all migrations. So, if you add another error for one migration, that error is also ignored in a second migration. Read more about it in the documentation.

C – RAC Databases

If your source database is a RAC database, then you only need access to one of the nodes. Select the node that you want to use and use the host name and SSH key to that host.

D – Response File

Allow me to put some comments on the values:

| Setting | Comment |

|---|---|

| DATAPUMPSETTINGS_CREATEAUTHTOKEN | I set this to false to re-use an existing auth token. I will be prompted to import the auto token when the migration starts |

| DATAPUMPSETTINGS_DATABUCKET_BUCKETNAME | Name of the bucket in OCI Object Storage that will be used as a staging area. I recommend using a separate and dedicated bucket for each migration |

| DATAPUMPSETTINGS_DATABUCKET_NAMESPACENAME | This is your Object Storage Namespace. You find it in the OCI Console, Administration > Tenancy Details |

| DATAPUMPSETTINGS_DATAPUMPPARAMETERS_EXPORTPARALLELISMDEGREE | For on-prem databases set to number of physical cores x 2. For OCI databases set to number of OCPUs. Standard Edition does not allow parallel, so set to 1 |

| DATAPUMPSETTINGS_DATAPUMPPARAMETERS_IMPORTPARALLELISMDEGREE | Set to the number of OCPUs |

| DATAPUMPSETTINGS_JOBMODE | I recommend using SCHEMA. Each schema to import is specified in a separate parameter, INCLUDEOBJECTS-n |

| DATAPUMPSETTINGS_METADATAREMAPS-n | You should remap any tablespace into DATA which is the only that exist in ADB. If you have a lot of data you will have DATA_2, DATA_3 and so forth. In addition, I am remapping SH into SH2. SH is already created as a common user in ADB, and I want to import into an empty schema. If you need to specify multiple remaps, increment the number and specify only one remap per parameter |

| INCLUDEOBJECTS-n | Specify each schema in a separate parameter, example: INCLUDEOBJECTS-1=owner:SH and INCLUDEOBJECTS-2=owner:OE |

| OCIAUTHENTICATIONDETAILS_USERPRINCIPAL_FINGERPRINT | This is the fingerprint of the API keys that you used to configure OCI CLI on the ZDM service host. You can find the fingerprint in the OCI Console. Go to Identify > Users > User Details > API Keys |

| OCIAUTHENTICATIONDETAILS_USERPRINCIPAL_PRIVATEKEYFILE | This is the private key file that you used when you configured OCI CLI on the ZDM service host |

| SOURCEDATABASE_CONNECTIONDETAILS_SERVICENAME | This is a service that connects directly into the source PDB (or non-CDB). I normally use lsnrctl status to find the name of the service |

| SOURCEDATABASE_ | These settings apply to my source PDB. Or, to your non-CDB |

E – Data Pump Logfile

Check out my Pro Tips for information on how to retrieve the Data Pump log file.

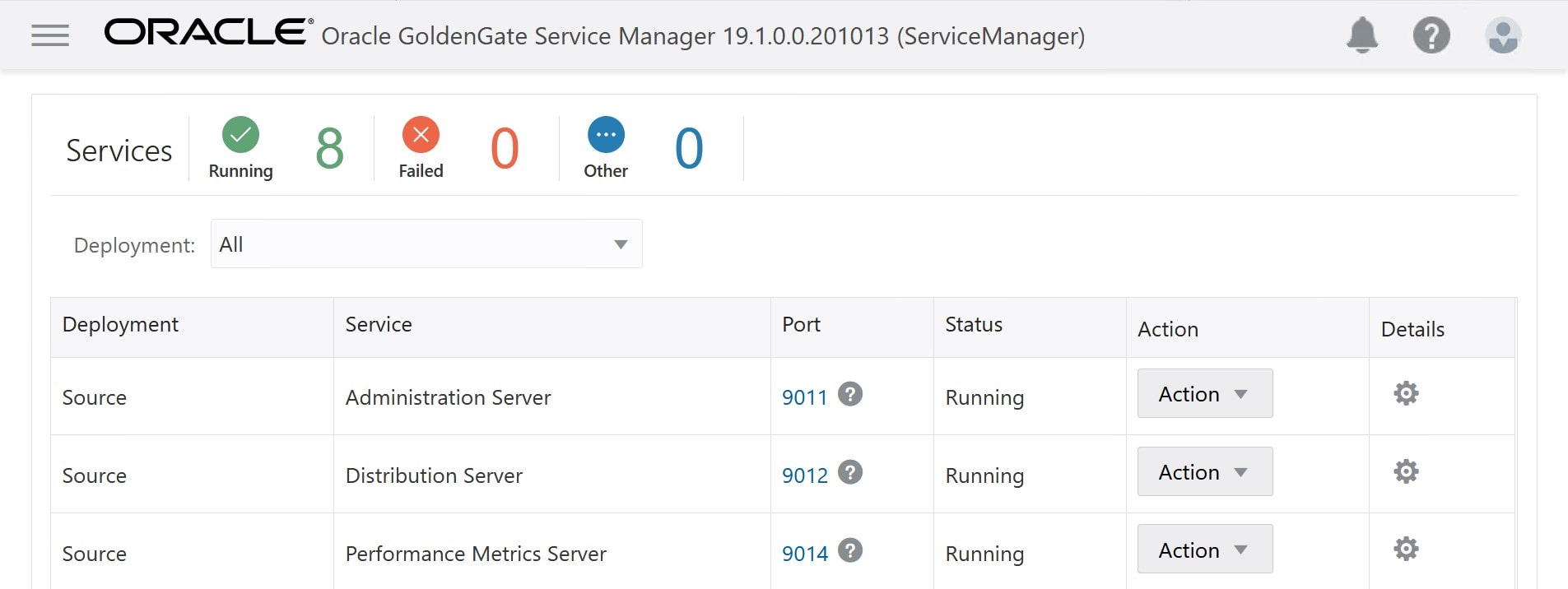

You can start and stop the services from the Action drop-down menu.

You can start and stop the services from the Action drop-down menu.

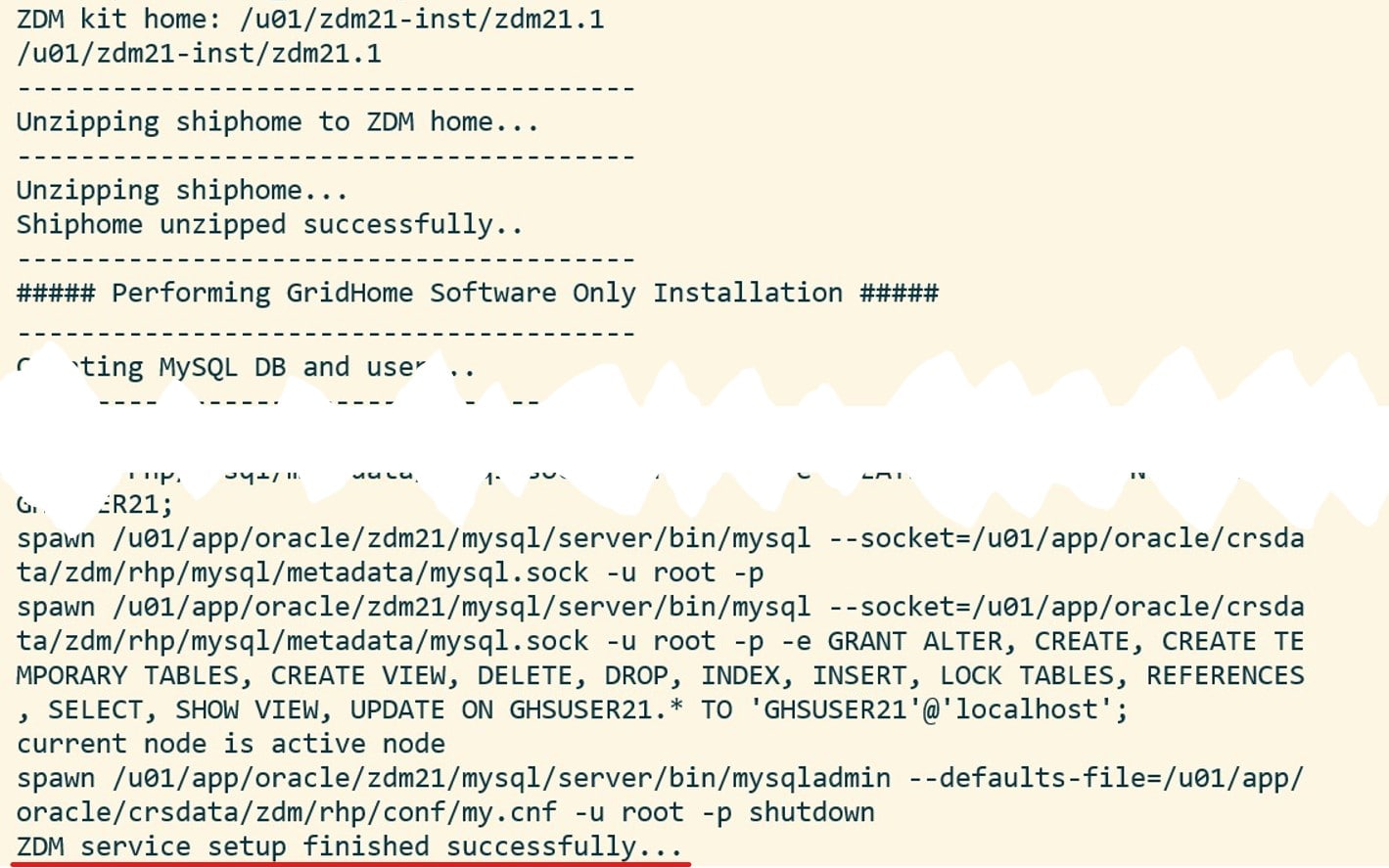

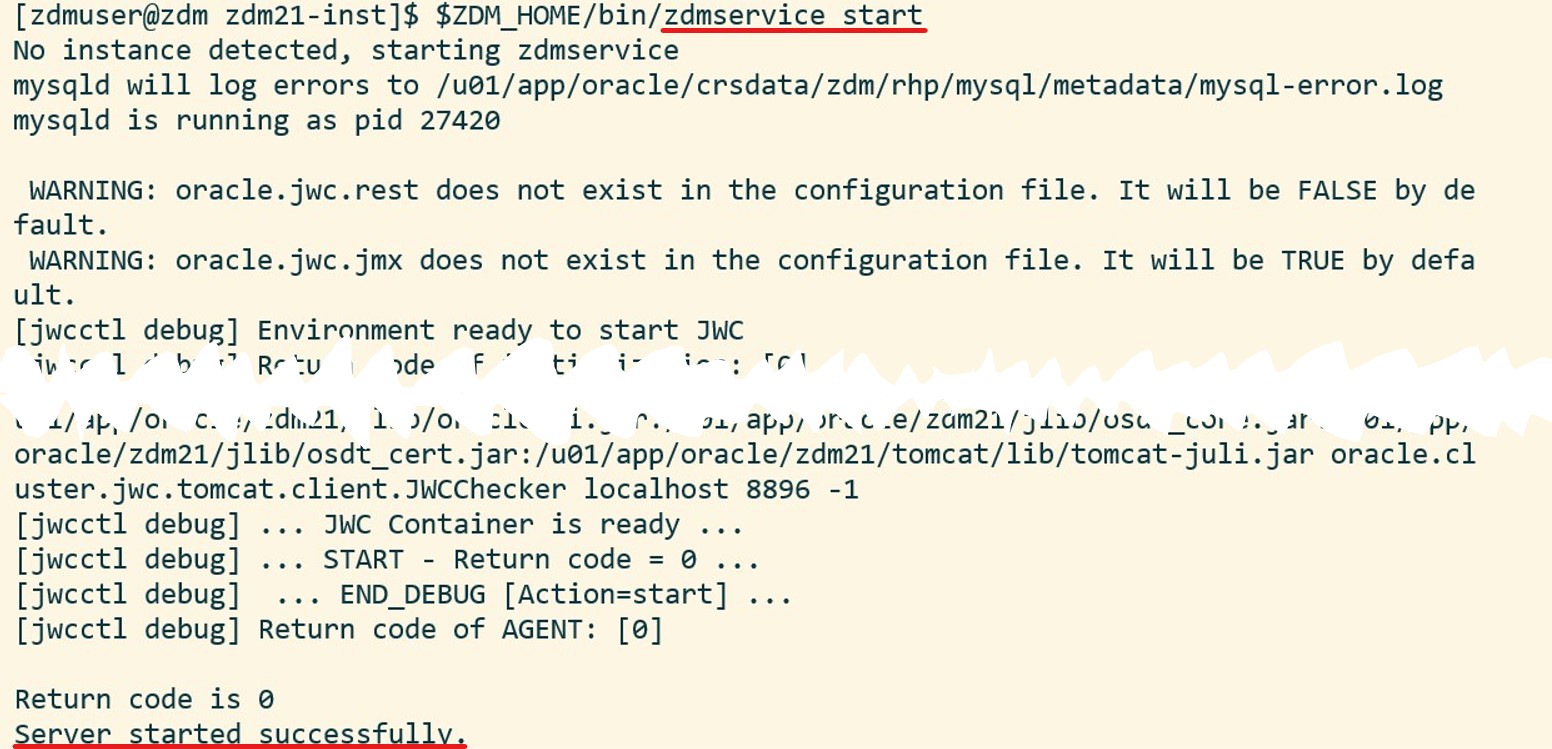

And, optionally, I can verify the status of the ZDM service:

And, optionally, I can verify the status of the ZDM service: