Often when you migrate an Oracle Database using cross-platform transportable tablespace (XTTS) and incremental backups, it is a big database. Big databases must often be protected by Data Guard.

How do you ensure that Data Guard protects the target database at the end of the migration?

Build After Migration

A simple solution is to build the standby database after the migration. There are some downsides to this approach:

- It takes time. In many situations, the business requires that the database can’t go live until a standby database is in place. Having to build a standby database will prolong the downtime.

- It puts a load on the primary database. Right after the migration, you don’t have your backups in place yet, so you will need to build the standby databases directly from the primary database. That requires a lot of I/O and network traffic. You might want to use those resources for other activities, like taking a level 0 backup or regathering statistics.

- It can become even more complicated if you migrate into multitenant architecture. Rebuilding the standby database of an already running, active CDB might not be an option. The other PDBs in the CDB are compromised while the standby is rebuilt.

Restore Data Files to Standby Host

Here is an approach that offers much less downtime. Restore the data files onto the standby host as well as part of the migration. During the Data Pump import, the plug-in propagates to the standby database via redo apply.

When migrating with XTTS and the Perl script you are familiar with running the backup on the source database (xttdriver.pl --backup). Also, you know how to restore and recover the data files on the target system (xttdriver.pl --restore).

Now, the idea is you restore and recover the data files on the primary host and also on the standby host. During the Data Pump import, the data files are plugged into the primary database. The plug-in commands are propagated to the standby database via redo apply. Then, the standby database can plug-in the data files if they are located in the same place as on the primary database. The rest of the Data Pump import will propagate as well, and in the end you will have a working Data Guard environment.

You must recover the data files on the primary and standby database to the exact same SCN. Be sure to restore all backups to both the primary and standby database.

How To

The following assumes that your target databases are using ASM. Also, I am migrating directly into a PDB. But the procedure is similar for migrations into a non-CDB database. Use the procedure described in my previous blog post or MOS note V4 Reduce Transportable Tablespace Downtime using Cross Platform Incremental Backup (Doc ID 2471245.1). Adjust the procedure according to the following.

- Prepare the target database and build a standby database before starting the migration.

- In xtt.properties set

destconnstrto point to the target primary database. - Copy xtt.properties to the target primary host and target standby host.

- On target standby host, change xtt.properties and set

destconnstrto point to the target standby database. - Create the cross-platform backups on the source database using

xttdriver.pl --backupin the usual way - Now copy the file res.txt to both the target primary and target standby database.

- The backups in the scratch location should also be available to both the target primary and standby databases.

- Now restore or recover the backups on the target primary and target standby database using

xttdriver.pl --restore. - Repeat the backup/restore/recover process as many times as needed. Keep recovering the target primary and target standby database. It is very important that the data files on both the target primary and target standby database are recovered to the exact same SCN.

- On the target databases the data files will be restored into ASM. The data file itself is created with an OMF file name:

It could be something like this:+<disk_group>/<db_unique_name>/<source_guid>/DATAFILE/<omf_name>+DATA/CDB1_FRA356/86D5DC2587337002E0532AB2A8C0A57C/DATAFILE/ACCOUNT.281.1099469863- The disk group is what you specified in xtt.properties in

dest_datafile_location - DB_UNIQUE_NAME is not the same on the primary and standby database. It will differ.

- To comply with OMF standard the database must restore the data files into a folder corresponding to the PDB it belongs to. However, currently, the data files do not belong to a PDB. We haven’t done the Data Pump import plug-in operation yet. The database will create a folder with the GUID of the source database. If you are interested, you can get the GUID from the source database using

select guid from v$containers. - The last part of the OMF file name is the tablespace name, and some numbers representing the file ID and a number to ensure uniqueness. This part will differ on the primary and standby database as well.

- The disk group is what you specified in xtt.properties in

- We now know that the data file name is different on the primary and standby database. Previously, it was stated that it is important that the data files are stored in the same location and has the same name. This is a problem! But the Perl script solves that by creating ASM aliases.

- The aliases will be created in the location specified by

dest_datafile_locationin xtt.properties. Useasmcmdto verify it. The column SYS (System-generated) is N, meaning this is not a proper OMF file. Also, we can see in the Name column that it is an alias:ASMCMD> ls -l +DATA Type Redund Striped Time Sys Name DATAFILE UNPROT COARSE MAR 16 08:00:00 N account_25.dbf => +DATA/CDB1_FRA2KR/86D5DC2587337002E0532AB2A8C0A57C/DATAFILE/ACCOUNT.282.1099469855 DATAFILE UNPROT COARSE MAR 16 08:00:00 N accountidx_26.dbf => +DATA/CDB1_FRA2KR/86D5DC2587337002E0532AB2A8C0A57C/DATAFILE/ACCOUNTIDX.280.1099469855 ... - If you look at the directory where the alias is pointing to, you can see that the files are proper OMF files – real data files. Column SYS is Y, and Name does not contain the alias pointer

=>:ASMCMD> cd +DATA/CDB1_FRA2KR/86D5DC2587337002E0532AB2A8C0A57C/DATAFILE ASMCMD> ls -l Type Redund Striped Time Sys Name DATAFILE UNPROT COARSE MAR 16 08:00:00 Y ACCOUNT.282.1099469855 DATAFILE UNPROT COARSE MAR 16 08:00:00 Y ACCOUNTIDX.280.1099469855 ... - Thus, the aliases are hiding the fact that the data files have different name on the target primary and target standby database.

- When you prepare the parameter file for the Data Pump import, be sure to reference the aliases – not the OMF named data files. The aliases have the same name on both the target primary and target standby database:

$ cat import.par transport_datafiles=+DATA/account_25.dbf transport_datafiles=+DATA/accountidx_26.dbf transport_datafiles=... - Then start the Data Pump import. The data files are plugged into the primary database during DATABASE_EXPORT/PLUGTS_FULL/PLUGTS_BLK. When the redo containing that information is applied on the standby database, the standby database will plug in the data files as well.

- When the Data Pump import completes, you can verify that the standby database survived and is still applying redo. A switch-over is also a good way of testing it.

Conclusion

You can prepare the target standby database in advance. This enables the target database to be protected by Data Guard as soon as the Data Pump import completes.

Restore and recover the data files to the target primary and target standby database. If they are recovered to the exact same SCN the plug-in of the data files propagates to the standby database via redo apply. After the Data Pump import, your target database has a fully functional standby database.

Further Reading

Other Blog Posts in This Series

- Introduction

- Prerequisites

- Full Transportable Export/Import

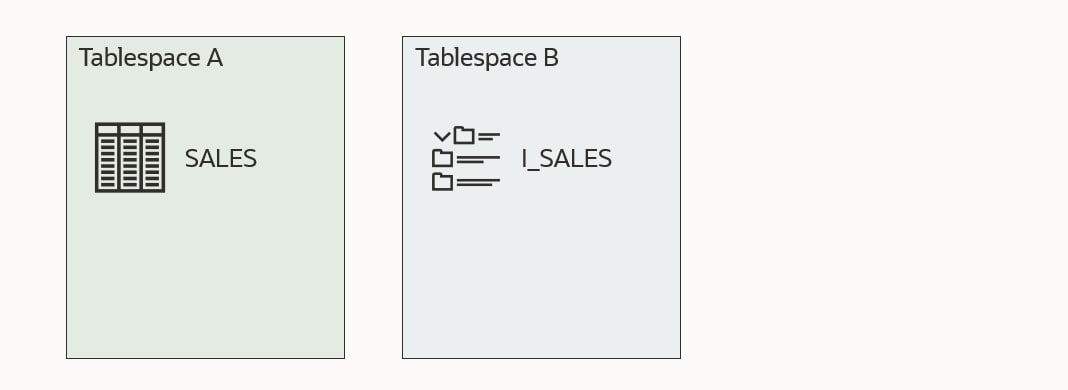

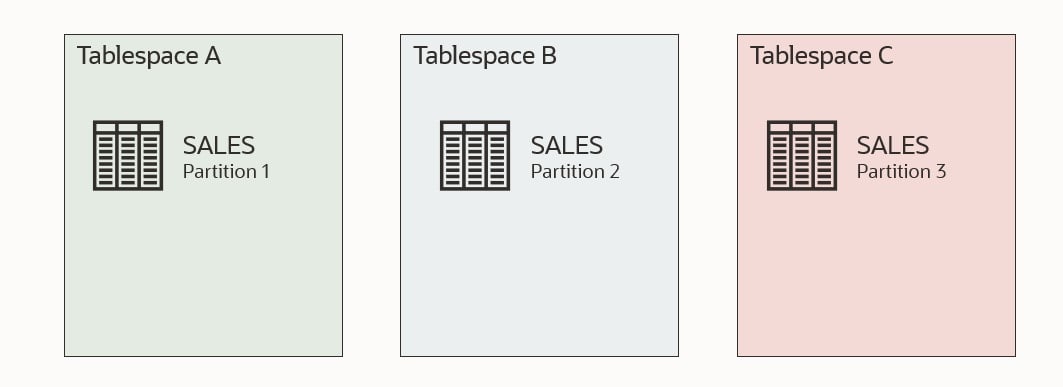

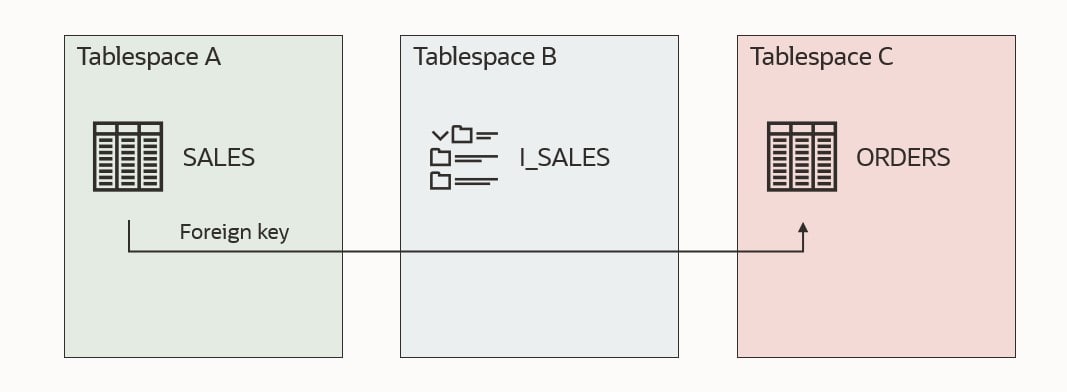

- What Is a Self-contained Transportable Tablespace Set

- How to Migrate a Database Using Full Transportable Export/Import and Incremental Backups

- Make It Fast

- Backup on Standby Database

- Slow Network Between Source and Target

- Bigfile Tablespaces

- Testing the Procedure On Activated Standby Database

- Testing the Procedure Multiple Times Using Read-Only Tablespaces

- Target Database and Data Guard

- ASM Aliases and Why You Should Get Rid of Them

- How to use Transportable Tablespace with TDE Tablespace Encryption

- Pro Tips